Azure Event Hub Overview

Communication between processes is fundamental to any business application. Because a business application involves several processes, it is necessary to choose the right form of communication between them. The first step is to analyse whether that communication happens through messages or events.

The rest of this article focuses on Azure Event Hubs and the role it plays in a business workflow.


Messages Vs Events

A business application might opt for events for certain processes and messages for others. To choose the right form of communication, it is necessary to analyse the application’s architecture and its use cases.

A message is raw data that passes between the sender and receiver processes in the application. It contains the data itself, not just a reference to it. The sender process expects the receiver process to handle the message content in a specific way.

Events are less complex than messages and are frequently used for broadcast communication. An event is a notification that indicates an occurrence or action in a process.

In general, the choice between a message and an event can be made by answering the question “Does the sender expect the receiver to process the communication in a particular way?”

If the answer is yes, use a message; if it is no, use an event.

Azure groups its services into two categories based on these forms of communication: message-based delivery and event-based communication. The message-based delivery services are:

- Azure Service Bus

- Azure Storage queues

The event-based communication technologies are:

- Event Grid

- Event Hub

What is Azure Event Hub used for?

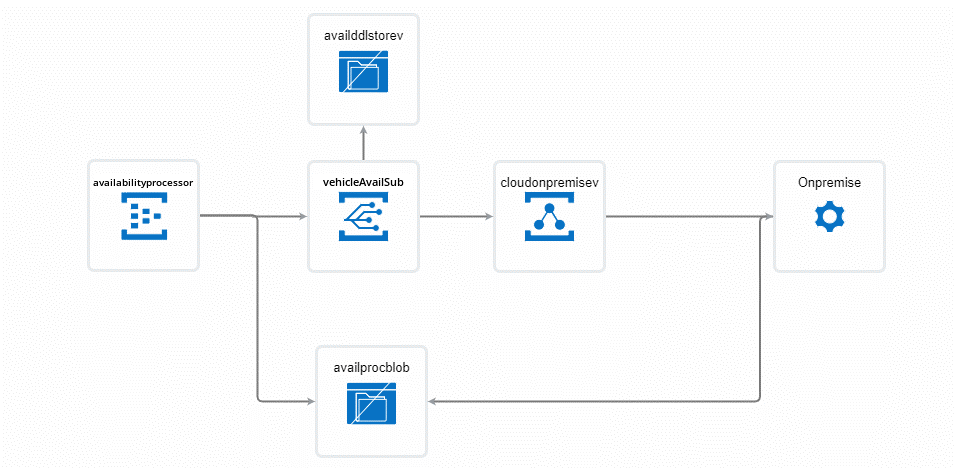

Azure Event Hubs and Event Grid are used in business orchestrations for event storage and handling. Let’s take a simple vehicle availability management scenario for better understanding.

In the orchestration above, the Event Hubs Capture feature automatically writes batches of captured events into Azure Storage Blob containers and enables timely batch-oriented processing of events.

Azure Event Hubs emits an event to Event Grid when a capture file is created. These events are not strongly correlated and do not require processing in batches. Hence, Event Grid is selected to provide reliable event delivery at massive scale.

Event Grid delivers the event to Azure Relay, which securely exposes a service running in the corporate network to the public cloud. The actual business logic that processes the telematics data in the storage blob container for decision-making analytics therefore resides in the on-premises service.

Azure Event Grid Vs Event Hub

As discussed before, an event is a lightweight communication method. To make the best use of it, Azure offers two event-based technologies. Let’s contrast the capabilities of Event Grid and Event Hubs, along with real-time scenarios that fit each.

Azure Event Hub

Azure Event Hubs is a data ingestion service that streams a huge number of messages from any source to provide an immediate response to business challenges. Event Hubs comes into play when events need to be handled along with their data. Unlike Event Grid, Event Hubs performs additional tasks beyond broadcasting events.

Azure Event Grid

Event Grid is an event-based technology that allows a publisher to inform consumers of a status change. It is an event routing service: it acts as a connector between applications and routes event messages from any source to any destination.

Real time Scenario

Consider a complete e-commerce application that combines various processing scenarios such as ordering, telemetry, and shipping. Each scenario has a huge number of events and a large volume of data streaming through it. Let us consider two scenarios that make the best use of Azure Event Hubs and Event Grid.

Start with the telemetry scenario, where you want to capture the telemetry data flowing out of the application. To ingest the data emitted along with the events, it is wise to opt for Event Hubs.

For shipping and transport, it is enough to know the status of the action being performed. In any e-commerce application, the consumer must be kept notified of whether the product has been manufactured, despatched, and shipped. Event Grid plays a vital role in these cases, where a notification of an event is enough.

Event Grid is designed for one-event-at-a-time delivery, whereas Event Hubs is built to deliver a large stream of events along with their data.


Concepts

With the forms of communication distinguished, it is important to understand the related terminology and concepts.

What is an Event Hub Namespace?

The namespace provides a dedicated scoping container in which you can create multiple event hubs.

How do event subscriptions in an Azure Event Hub Namespace work?

- Go to the Azure Activity Log and export it to your preferred event hub.

- Select “+ Event Subscription” from the “Events” option in the Event Hub namespace.

- Specify that all events be captured, and that the endpoint be a Storage Queue.

What is an Event Publisher?

Any resource that sends data to an event hub is a publisher. Events are published using the HTTPS or AMQP protocol. HTTPS is adequate for low-volume publishing, but AMQP is the better choice for high volumes because it offers better performance, latency, and throughput. Event publishers use a Shared Access Signature (SAS) token to identify themselves. Each publisher can hold a unique identity or share a common SAS token, depending on the business requirements. Events can be published individually or as a batch, but a single publication cannot exceed the maximum size limit (256 KB in the basic tier, 1 MB in the standard tier). A publication that exceeds the limit results in an exception.
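The effect of the publication size limit on batching can be illustrated with a minimal Python sketch. This is not the Event Hubs SDK: `batch_events` and `MAX_BATCH_BYTES` are hypothetical names, the 1 MB default is an assumed limit, and the sketch counts only payload bytes, whereas a real publication also carries protocol overhead.

```python
from typing import Iterable, List

# Assumed limit for illustration only (1 MB).
MAX_BATCH_BYTES = 1024 * 1024


def batch_events(events: Iterable[bytes], max_bytes: int = MAX_BATCH_BYTES) -> List[List[bytes]]:
    """Group event payloads into batches whose total size stays within max_bytes."""
    batches: List[List[bytes]] = []
    current: List[bytes] = []
    current_size = 0
    for payload in events:
        if len(payload) > max_bytes:
            # A single oversized event can never be published, so fail early.
            raise ValueError(f"event of {len(payload)} bytes exceeds limit of {max_bytes}")
        if current and current_size + len(payload) > max_bytes:
            # Adding this event would overflow the batch: close it and start a new one.
            batches.append(current)
            current, current_size = [], 0
        current.append(payload)
        current_size += len(payload)
    if current:
        batches.append(current)
    return batches
```

Splitting on the publisher side like this avoids the exception that an oversized publication would otherwise raise.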

What is Event Hub Capture?

Event Hubs Capture automatically captures the data published to Event Hubs and stores it in either an Azure Blob Storage account or an Azure Data Lake Store account. Capture can be enabled from the Azure portal by defining the size and time interval that trigger the capture action. The storage account or container that holds the captured data can also be specified. Event Hubs Capture reduces the complexity of loading the data and lets you focus on data processing.
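The interplay of the size and time settings can be sketched as follows, assuming a capture window closes on whichever limit is reached first. `CaptureWindow` is a hypothetical in-memory model, not part of any Azure SDK, and it only checks the time limit when a new event arrives; the real service runs on its own timer.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class CaptureWindow:
    """Illustrative capture window: flush when either the size or the time limit is hit."""
    max_bytes: int
    max_seconds: float
    opened_at: float = 0.0
    buffered: List[bytes] = field(default_factory=list)
    buffered_bytes: int = 0
    flushed: List[List[bytes]] = field(default_factory=list)

    def add(self, payload: bytes, now: float) -> None:
        # Close the current window first if its time limit has already elapsed.
        if self.buffered and now - self.opened_at >= self.max_seconds:
            self._flush()
        if not self.buffered:
            self.opened_at = now  # first event opens a new window
        self.buffered.append(payload)
        self.buffered_bytes += len(payload)
        # Close the window as soon as the size limit is reached.
        if self.buffered_bytes >= self.max_bytes:
            self._flush()

    def _flush(self) -> None:
        # Stand-in for writing one capture file to the configured storage.
        self.flushed.append(self.buffered)
        self.buffered, self.buffered_bytes = [], 0
```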

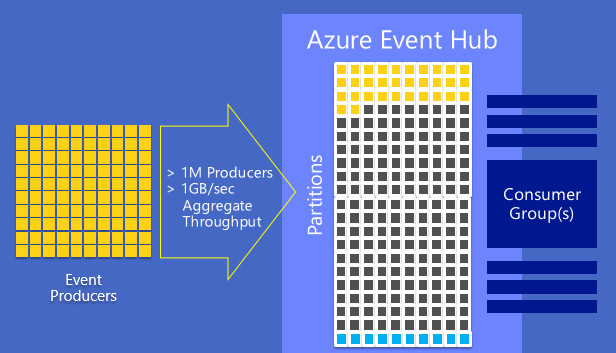

What are Event Hub Partitions?

Azure Event Hubs splits the streaming data into partitions, and each consumer or receiver reads only a specific subset, or partition, of the data. Storage and retrieval therefore work differently from Service Bus queues and topics, where every consumer reads from a single queue lane. Service Bus follows the “Competing Consumer” pattern, in which the single lane eventually limits scale. Azure Event Hubs instead follows the “Partitioned Consumer” pattern, in which multiple lanes are available, and this improves scaling.

Another advantage of Azure Event Hubs is that the data in a partition stays even after a consumer reads it, whereas in Service Bus queues a message is removed once it has been read. The events in a partition cannot be removed manually; they are deleted automatically when the specified retention period expires.
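The retention behaviour described above can be modelled with a small in-memory sketch. `Partition` here is a hypothetical class written for illustration; it is not the service's storage engine, and the offsets and timestamps are simplified.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class Partition:
    """Illustrative append-only partition with time-based retention."""
    retention_seconds: float
    # Each entry is (offset, enqueued_at, body).
    _events: List[Tuple[int, float, bytes]] = field(default_factory=list)
    _next_offset: int = 0

    def append(self, body: bytes, now: float) -> int:
        offset = self._next_offset
        self._events.append((offset, now, body))
        self._next_offset += 1
        return offset

    def read_from(self, offset: int) -> List[Tuple[int, bytes]]:
        # Reading never removes events, so any reader can replay from an earlier offset.
        return [(o, b) for (o, _, b) in self._events if o >= offset]

    def expire(self, now: float) -> None:
        # Only the retention period removes events; there is no manual delete.
        self._events = [e for e in self._events if now - e[1] < self.retention_seconds]
```

Because reads do not consume events, two readers can call `read_from(0)` and both receive the full retained history.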

What are SAS Tokens?

A Shared Access Signature (SAS) token grants limited access to a namespace. The access is based purely on authorization rules, which are configured either on the namespace or on an entity. An authorization rule supports the following rights:

- Send - grants the right to send messages to the entity

- Listen - grants the right to listen or receive

- Manage - grants the right to manage the entity
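As an illustration of how such a token is put together, the sketch below follows the commonly documented SAS scheme: an HMAC-SHA256 signature over the URL-encoded resource URI and an expiry timestamp. The function name `generate_sas_token`, the `now` parameter (added so the result can be reproduced), and the example values are assumptions for this sketch.

```python
import base64
import hashlib
import hmac
import time
import urllib.parse
from typing import Optional


def generate_sas_token(resource_uri: str, key_name: str, key: str,
                       ttl_seconds: int = 3600, now: Optional[float] = None) -> str:
    """Build a SAS token string for the given resource URI and authorization rule."""
    issued_at = time.time() if now is None else now
    expiry = str(int(issued_at + ttl_seconds))
    encoded_uri = urllib.parse.quote_plus(resource_uri)
    # The signed string is the encoded URI and the expiry, separated by a newline.
    string_to_sign = f"{encoded_uri}\n{expiry}".encode("utf-8")
    digest = hmac.new(key.encode("utf-8"), string_to_sign, hashlib.sha256).digest()
    signature = urllib.parse.quote_plus(base64.b64encode(digest).decode("ascii"))
    return f"SharedAccessSignature sr={encoded_uri}&sig={signature}&se={expiry}&skn={key_name}"
```

A call such as `generate_sas_token("https://example.servicebus.windows.net/myhub", "send-policy", "<key>")` would produce a token that is valid for one hour; the namespace, hub, and policy names in that call are placeholders.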

What are Event Consumers?

Any application that reads data from an Azure event hub is a consumer. Multiple consumers can be allocated to the same event hub, and each can read the same partition data at its own pace. Event hub consumers connect through AMQP channels, which makes data availability easier for clients because they do not need to poll for it. This is significant for scalability and avoids unnecessary load on the application.

What are Consumer Groups?

Requirements vary from one consumer to another. To cope with this, Azure Event Hubs provides consumer groups. A consumer group is a view of the entire event hub that lets each consuming application read the stream independently. Data in the event hub can be accessed only through a consumer group; partitions cannot be accessed directly. A default consumer group is created when the event hub is created.
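The idea of independent views over the same data can be sketched with a toy model. `EventHubView` is a hypothetical class, and it keeps each group's read position inside the hub for brevity; in practice consuming applications track their own positions. The name `$Default` mirrors the default consumer group.

```python
from typing import Dict, List


class EventHubView:
    """Illustrative model: one shared event log, one read position per consumer group."""

    def __init__(self) -> None:
        self._log: List[str] = []
        # The default consumer group exists from the moment the hub is created.
        self._positions: Dict[str, int] = {"$Default": 0}

    def publish(self, event: str) -> None:
        self._log.append(event)

    def add_consumer_group(self, name: str) -> None:
        # A new group starts at the beginning of the retained stream.
        self._positions.setdefault(name, 0)

    def receive(self, group: str, max_events: int = 10) -> List[str]:
        start = self._positions[group]  # raises KeyError if the group was never created
        events = self._log[start:start + max_events]
        self._positions[group] = start + len(events)
        return events
```

Each group advances only its own position, so a slow analytics reader does not hold back a fast alerting reader.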


The W’s of Azure Event Hubs

The W’s section covers three questions, “Why, When and Where”, that explain how Azure Event Hubs comes into play.

Why?

- Azure Event Hubs allows you to build a data pipeline capable of processing a huge number of events per second with low latency.

- It can process data from parallel sources and connect them to different infrastructures and services.

- It supports repeated replay of stored data.

When?

- To validate many publishers and to save the events in a Blob Storage or Data Lake.

- When you want to get timely insights on the business application.

- To obtain reliable messaging or flexibility for Big Data Applications.

- For seamless integration with data and analytics services to create big data pipeline.

Where?

- Anomaly Detection

- Application Logging

- Archiving data

- Telemetry processing

- Live Dashboarding

Together, these three W’s summarise the characteristics of Azure Event Hubs and explain why a business application might need an event hub.

Process Events

Latest SDK

Event Hubs client libraries are available across platforms, with a broad ecosystem for languages such as:

- .NET

- Java

- Python

- Node.js

- Go

With the support of these languages, you can easily start processing your stream from Event Hubs. All supported client languages provide low-level integration. For regular updates on the latest SDKs for event processing, refer to the Microsoft documentation.

Stream Analytics

Azure Event Hubs and Stream Analytics can provide an end-to-end solution for real-time analytics. Event Hubs can feed events into Azure in real time, and Stream Analytics jobs can process those events in real time. For example, you can send web clicks, sensor readings, or online log events to Azure Event Hubs. You can then create Stream Analytics jobs that use Azure Event Hubs as the input data stream for real-time filtering, aggregation, and correlation.

Geo-Disaster Recovery

Downtime is a risk with any cloud provider. An entire Azure region or datacentre can become unavailable if availability zones are not used. When that happens, data processing is affected, and it becomes critical to switch processing to a different region or datacentre. Geo-disaster recovery and geo-replication, both important features for any enterprise, address this disruption. Azure Event Hubs supports both geo-disaster recovery and geo-replication at the namespace level.

Geo-disaster recovery is available only for the standard and dedicated SKUs.

Outages and Disasters

An outage is the short-term unavailability of Azure Event Hubs and can affect some components or even the entire datacentre. For example, an outage can be caused by a power failure or by network issues in the datacentre. It does not result in any loss of messages or events.

A disaster is a long-term loss of an event hub, which might result in the permanent loss of some messages or events. It is typically caused by something like an earthquake or a flood. The affected region or datacentre may or may not become available again, or may be down for hours or days.

Key terms

The disaster recovery feature relies on primary and secondary namespaces and implements metadata disaster recovery.

Alias:

The name of the disaster recovery configuration that you set up. The connection is done through the alias and no connection string changes are required.

Primary Namespace:

The primary namespace is one of the namespaces that correspond to the alias. It is “active” and receives messages.

Secondary Namespace:

In contrast with primary namespace, a secondary namespace is “passive” and does not receive messages.

Metadata:

Metadata is the replication of entities and their settings associated with the namespace. Messages and events are not replicated. The metadata between primary and secondary namespaces is in sync, so both can easily accept messages without the use of any application code or connection string changes.

Failover:

The process of activating the secondary namespace.

Setup and failover flow

During setup, the primary and secondary namespaces are created and paired. The pairing results in an alias, which is used to connect instead of a namespace-specific connection string. Only new namespaces can be added to a failover pairing. Monitoring is required to detect whether a failover is necessary.

Once a failover has been initiated, two further steps are required:

- Another outage may occur, so it must be possible to fail over again. Set up another passive namespace and update the pairing.

- Once the old primary namespace is available again, pull the messages from it. After that, either use that namespace outside of the geo-recovery setup or delete it.

How do I get the connection string for an Event Hub?

- In the Azure portal, select All services from the left navigation menu.

- In the Analytics section, choose Event Hubs.

- Select your event hub from the list of event hubs.

- On the Event Hubs Namespace page, select Shared access policies from the left menu.

- From the list of policies, choose a shared access policy. The default one is RootManageSharedAccessKey. You can also create a policy with only the rights you need (for example, Send or Listen) and use that.

- Click the copy button next to the Connection string-primary key field.
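Once copied, the connection string is a semicolon-separated list of key/value pairs. The sketch below shows one way to split it apart, assuming the usual `Endpoint`, `SharedAccessKeyName`, `SharedAccessKey`, and optional `EntityPath` keys; `parse_connection_string` is a hypothetical helper, and the values in the usage example are placeholders rather than real credentials.

```python
from typing import Dict


def parse_connection_string(conn_str: str) -> Dict[str, str]:
    """Split an Event Hubs style connection string into its key/value parts."""
    parts: Dict[str, str] = {}
    for segment in conn_str.split(";"):
        if not segment.strip():
            continue  # tolerate a trailing semicolon
        # Split on the first "=" only, because base64 keys often end with "=" padding.
        name, _, value = segment.partition("=")
        parts[name.strip()] = value.strip()
    missing = {"Endpoint", "SharedAccessKeyName", "SharedAccessKey"} - parts.keys()
    if missing:
        raise ValueError(f"connection string is missing: {sorted(missing)}")
    return parts


example = (
    "Endpoint=sb://example.servicebus.windows.net/;"
    "SharedAccessKeyName=RootManageSharedAccessKey;"
    "SharedAccessKey=abc123=;EntityPath=myhub"
)
print(parse_connection_string(example)["Endpoint"])
```

Validating the required keys up front gives a clearer error than a failed connection attempt later.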

Security

Security is an important aspect of any resource. Azure Event Hubs provides security at two levels:

- Authorization

- Authentication

Authorization

Every request to a secure resource must be authorized to ensure that the client has the required permissions to access the data. Azure Event Hubs provides the following options for authorizing access:

- Azure Active Directory

- Shared Access Signature

Authentication

Authentication is the process of validating and verifying the identity of a user or process. The user authentication ensures that the individual is recognised by the Azure platform. User verification can be done by,

- Authenticating with Azure Active Directory

- Authenticating with Shared Access Signature

Security Controls

A security control is a characteristic of an Azure resource that gives it the ability to prevent, detect, and respond to security vulnerabilities. Azure Event Hubs offers security controls in several areas:

| Area | Security controls |
| --- | --- |
| Network | Service endpoint support; network isolation and firewalling support |
| Monitoring and Logging | Azure monitoring support; control and management plane logging and audit; data plane logging and audit |
| Identity | Authentication; authorization |
| Data Protection | Microsoft-managed keys; customer-managed keys; encryption in transit; API calls encryption |
| Configuration Management | Configuration management support |

AMQP 1.0

Advanced Message Queuing Protocol (AMQP) 1.0 is a transfer protocol used to securely transfer messages between two parties. It is the primary protocol for both Service Bus and Event Hubs; both services also support HTTPS.


Manage Azure Event Hub in Azure Portal

Azure Event Hubs is an ingestion service that deals with millions of events per second, and managing such a massive volume is a complex process. Azure provides event hub management capabilities such as:

- Auto-Inflate

- Enable Capture

Auto-Inflate

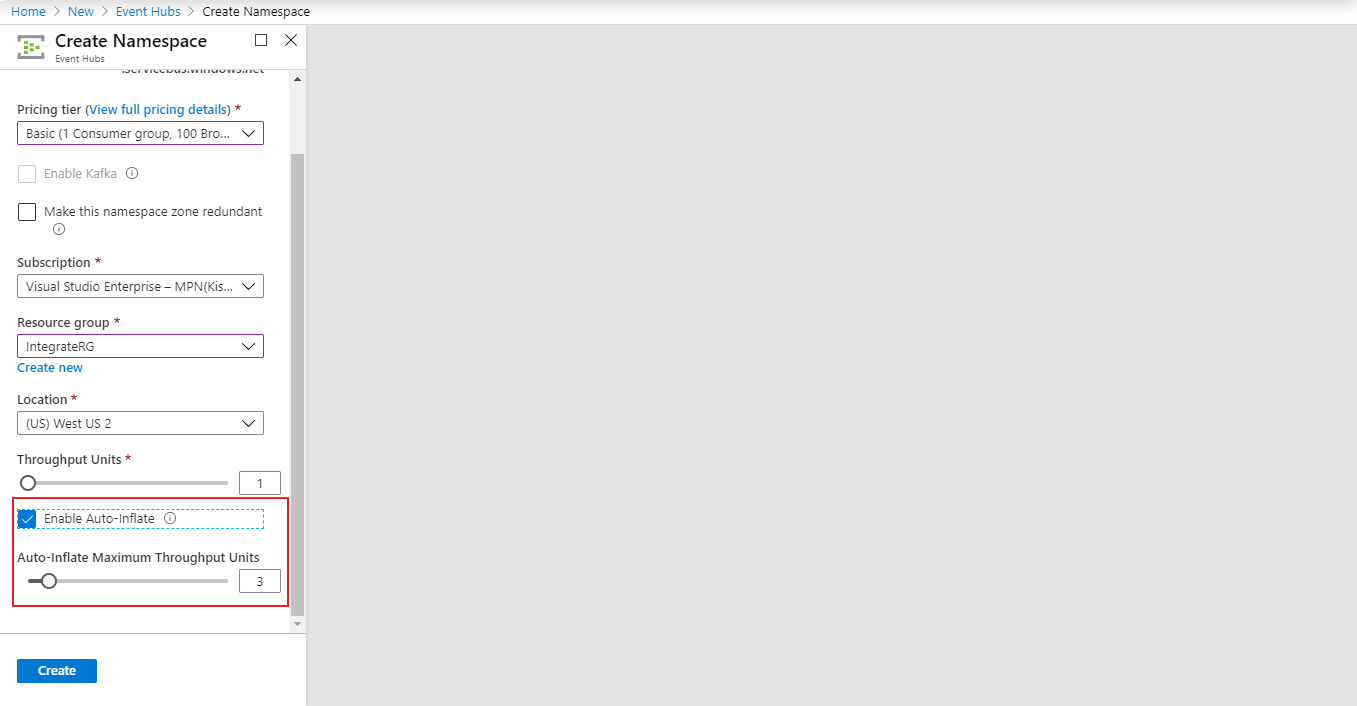

Consider a power/energy company building an infrastructure in which delivering the maximum throughput requested by customers is critical. Auto-scaling addresses this: enough capacity can be provisioned when demand rises and scaled down later.

How do you scale an Event Hub?

The two main factors that affect the scaling of Event Hubs are:

- Throughput units (standard tier) or processing units (premium tier)

- Partitions

What are Event Hub Throughput Units?

Throughput in Event Hubs is the volume of data in megabytes, or the number (in thousands) of 1-KB events, that ingress and egress through Event Hubs. It is measured in throughput units (TUs). TUs must be purchased before you can use the Event Hubs service. You can select the number of TUs through the portal or through Event Hubs Resource Manager templates.

What is ingress and egress in Event Hub?

Scaling of Azure Event Hubs is done with throughput units, which define the throughput capacity of the event hubs. A single throughput unit includes:

- Ingress: Up to 1 MB per second or 1000 events per second

- Egress: Up to 2 MB per second

These throughput unit values are adequate when usage is predictable. When more data needs to flow through Azure Event Hubs, customers usually increase the number of throughput units manually. This manual intervention can be avoided by using the Auto-Inflate functionality of Azure Event Hubs.
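The per-unit limits listed above translate directly into a sizing calculation. The following is a minimal sketch, with `required_throughput_units` as a hypothetical helper name; it uses only the three limits quoted in this section and ignores any tier-specific caps on the number of units.

```python
import math


def required_throughput_units(ingress_mb_per_s: float,
                              ingress_events_per_s: float,
                              egress_mb_per_s: float) -> int:
    """Smallest TU count that covers all three per-TU limits."""
    needed = max(
        ingress_mb_per_s / 1.0,         # 1 MB/s ingress per TU
        ingress_events_per_s / 1000.0,  # 1000 events/s ingress per TU
        egress_mb_per_s / 2.0,          # 2 MB/s egress per TU
    )
    # At least one TU is always needed to use the service.
    return max(1, math.ceil(needed))
```

For example, a workload of 3.5 MB/s ingress, 500 events per second, and 4 MB/s egress is bound by ingress volume, so it needs 4 throughput units.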

Azure Event Hubs: Auto Inflate is a cost-effective functionality offered by Microsoft that provides control based on the usage and demands without any changes in the existing setup. It enables users to automatically scale up throughput units to meet the demands.

Enabling Auto-Inflate

The Auto-Inflate functionality can be enabled when the event hub is deployed, and the maximum number of throughput units that Auto-Inflate may reach can be set at the same time.
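The role of the maximum setting can be shown with a short sketch of a single scaling decision. `auto_inflate` is a hypothetical function that assumes the feature only ever scales up and never exceeds the configured maximum; it does not reproduce how the service measures load.

```python
def auto_inflate(current_tus: int, required_tus: int, max_tus: int) -> int:
    """Return the TU count after one scaling decision: scale up only, capped at max_tus."""
    if required_tus <= current_tus:
        # Demand is already covered; this sketch never scales down.
        return current_tus
    # Scale up towards demand, but never beyond the configured maximum
    # and never below what is currently provisioned.
    return max(current_tus, min(required_tus, max_tus))
```

With 2 units provisioned and a maximum of 10, a demand of 5 units leads to 5, while a demand of 15 units stops at the maximum of 10.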

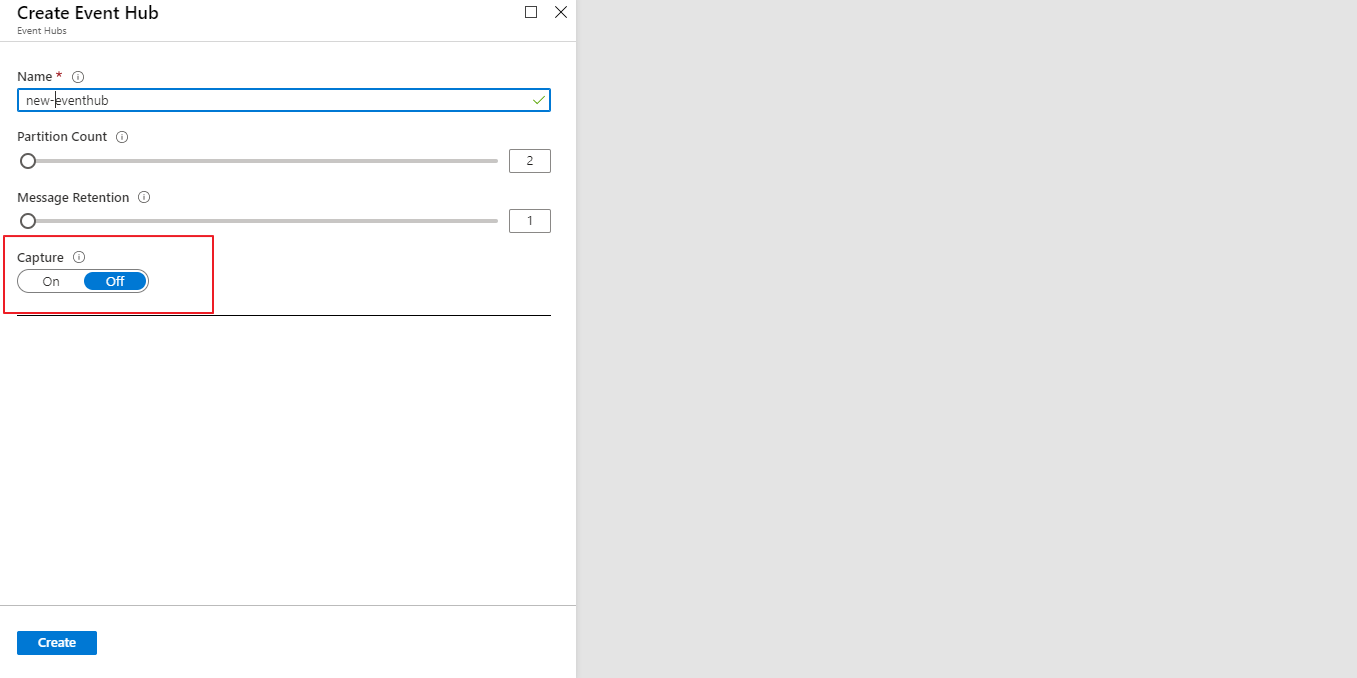

Enable capture

Where does Event Hub store data?

Capture can be enabled during the creation of the event hub, and the captured events are stored in an Azure Blob Storage or Azure Data Lake Store account. Setting up Capture is easy: it involves no administrative cost to run and scales in parallel with throughput units.

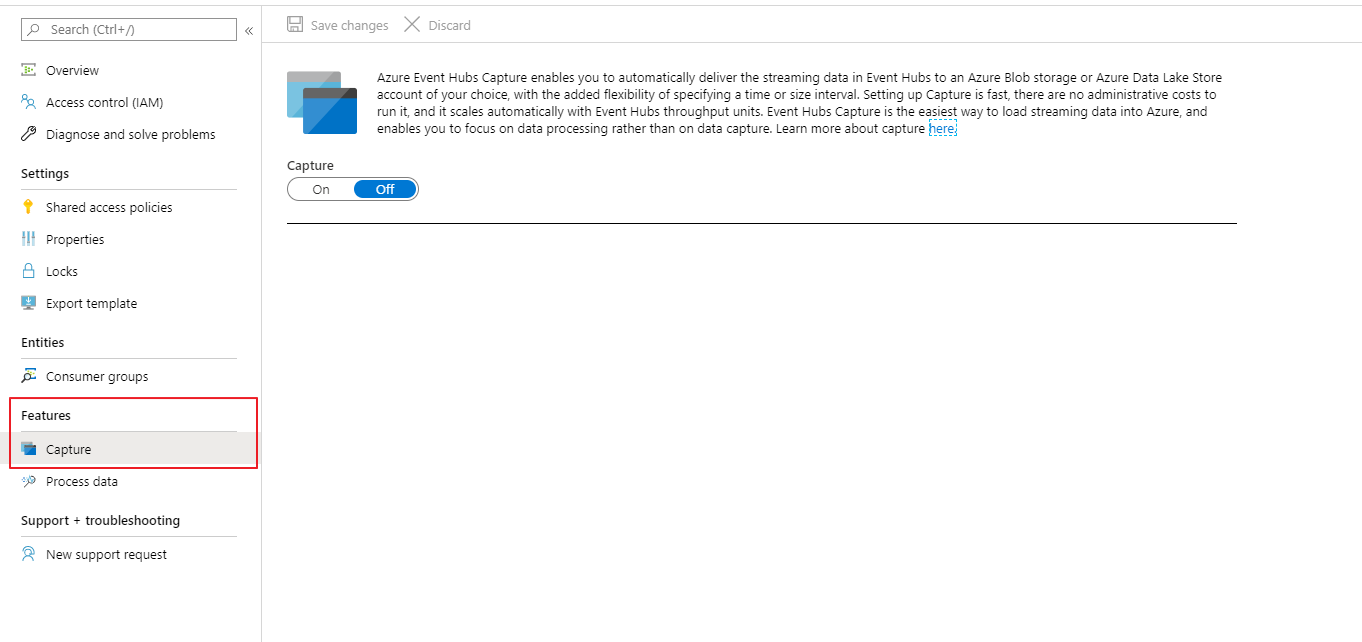

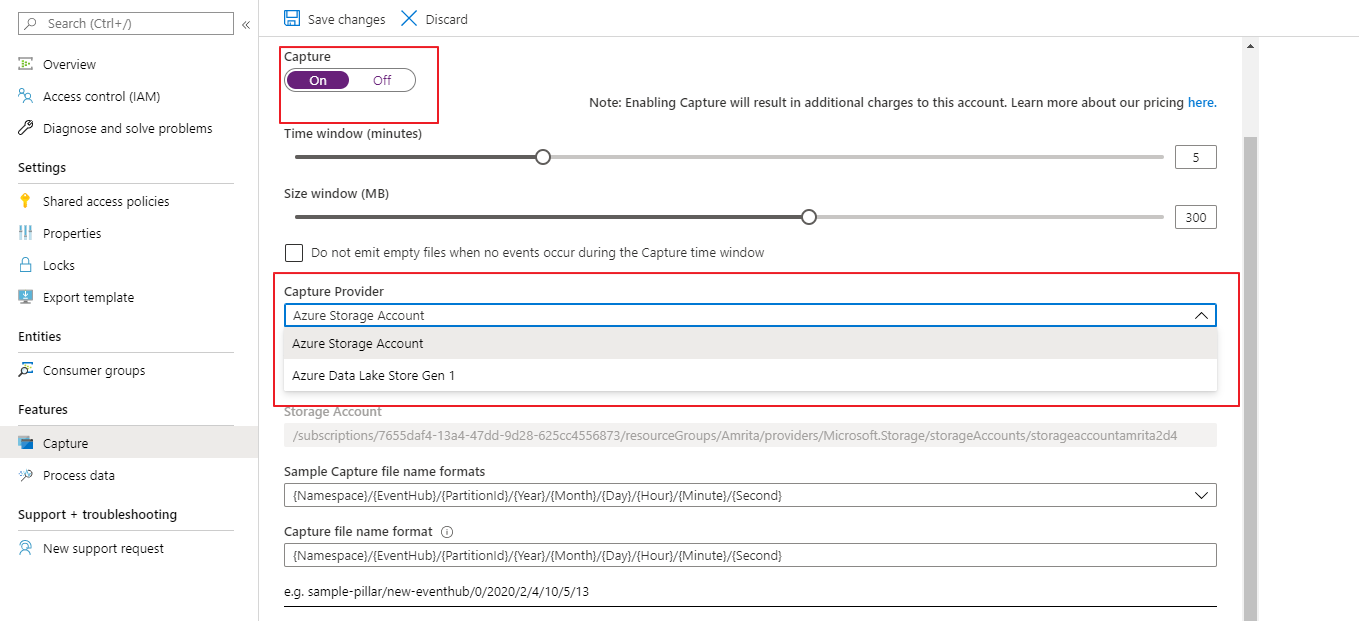

It is also possible to enable the capture from the “Capture” option available in the left blade under the Features section.

When the capture option is enabled, a window appears in which the storage details (Azure Storage Blob or Data Lake Store) are entered.

How do I read data from Azure Event Hub?

Data from an Azure event hub is received using an event hub consumer. Multiple consumers can be allocated to the same event hub, and each can read the same partition data at its own pace. Event hub consumers connect through AMQP channels, which makes data availability easier for clients.

How do I connect to Event Hub?

Shared Access Policy can be used to connect an application with Azure Event hub. To obtain the Shared Access Keys, we can use the Azure portal or Azure CLI.

How do I create an Event Hub in Azure?

- Sign-in to the Azure Portal.

- On the portal, click +New–>Internet of Things–>Event Hubs.

- In the “Create Namespace” blade, enter the name of your Event Hub in the name field, then choose the Standard Pricing Tier, and choose the desired subscription to create the Event Hub under it.

How to send data to Azure Event Hub?

Follow these steps to send data to an event hub from code:

- Create a console application in .NET Core.

- Once the console application exists, add a class library project that sends the data. The main project, or driver, merely invokes functions from that class library.

- Create a “Sender” class library project.

- Add a new file called Sample.Sender.cs, and create a folder called Models. This folder houses the model class used to map the data before it is sent. Create a SampleData class in the Models folder.

- Add a class named SenderHelper.cs to the main driver project.

- The Main method uses this class as a controller to deliver data to the event hub.

- In Program.cs, specify the number of messages you would like to send to the event hub.
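The structure these steps describe (a model class, a sender helper, and a driver that sets the message count) can be sketched in Python as well. This is a transport-agnostic outline, not the .NET project itself: the fields of `SampleData` are invented for illustration, and `send` is a hypothetical stand-in for whichever SDK call actually publishes to the event hub.

```python
import json
from dataclasses import asdict, dataclass
from typing import Callable


@dataclass
class SampleData:
    """Model class that maps the data before it is sent (fields are illustrative)."""
    id: int
    message: str


class SenderHelper:
    """Serialises SampleData instances and hands them to a transport callable."""

    def __init__(self, send: Callable[[bytes], None]) -> None:
        # `send` stands in for the real publish call; it is injected so the
        # helper can be exercised without a live event hub.
        self._send = send

    def send_messages(self, count: int) -> int:
        for i in range(count):
            payload = json.dumps(asdict(SampleData(id=i, message=f"event {i}")))
            self._send(payload.encode("utf-8"))
        return count


def main(number_of_messages: int, send: Callable[[bytes], None]) -> None:
    # Mirrors the driver: it only decides how many messages to send.
    SenderHelper(send).send_messages(number_of_messages)
```

Passing a list's `append` method as `send` is a simple way to inspect what would have been published before wiring in a real client.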

How do I turn off an Azure Event Hub?

- Log in to the administrator interface for Azure Stack Hub.

- On the left, click Marketplace Management.

- Select Resource providers.

- From the list of resource providers, choose Event Hubs. You can narrow down the results by typing “Event Hubs” into the search box given.

- Choose Uninstall from the drop-down menus at the top of the page.

- Select Uninstall after entering the resource provider’s name.

Monitor Azure Event Hub in Azure Portal

Azure Event Hubs can be monitored through the event hub metrics exposed in Azure Monitor. The overall status of an event hub can be assessed at both the namespace level and the entity level, using the metrics data that Azure Monitor collects.

Azure Monitor

Azure services are monitored through the combined user interfaces that Azure Monitor provides.

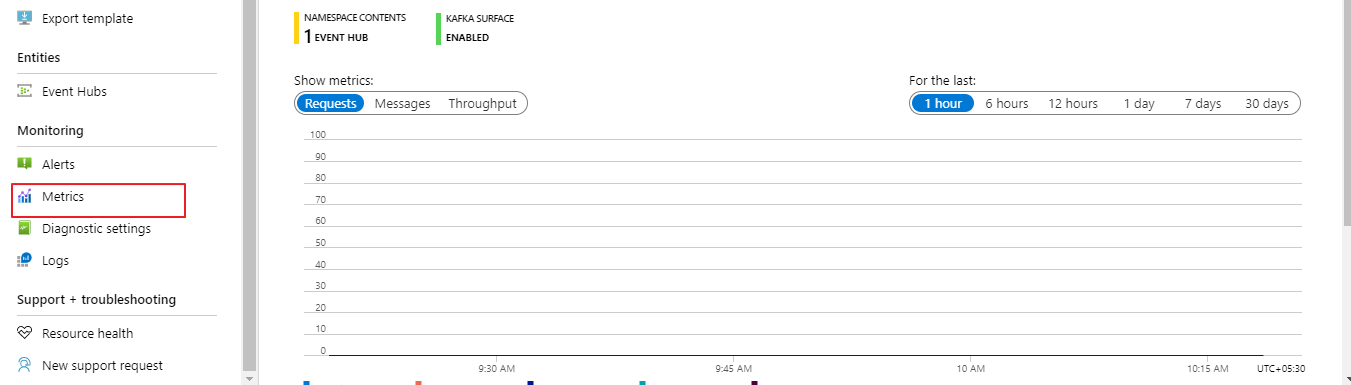

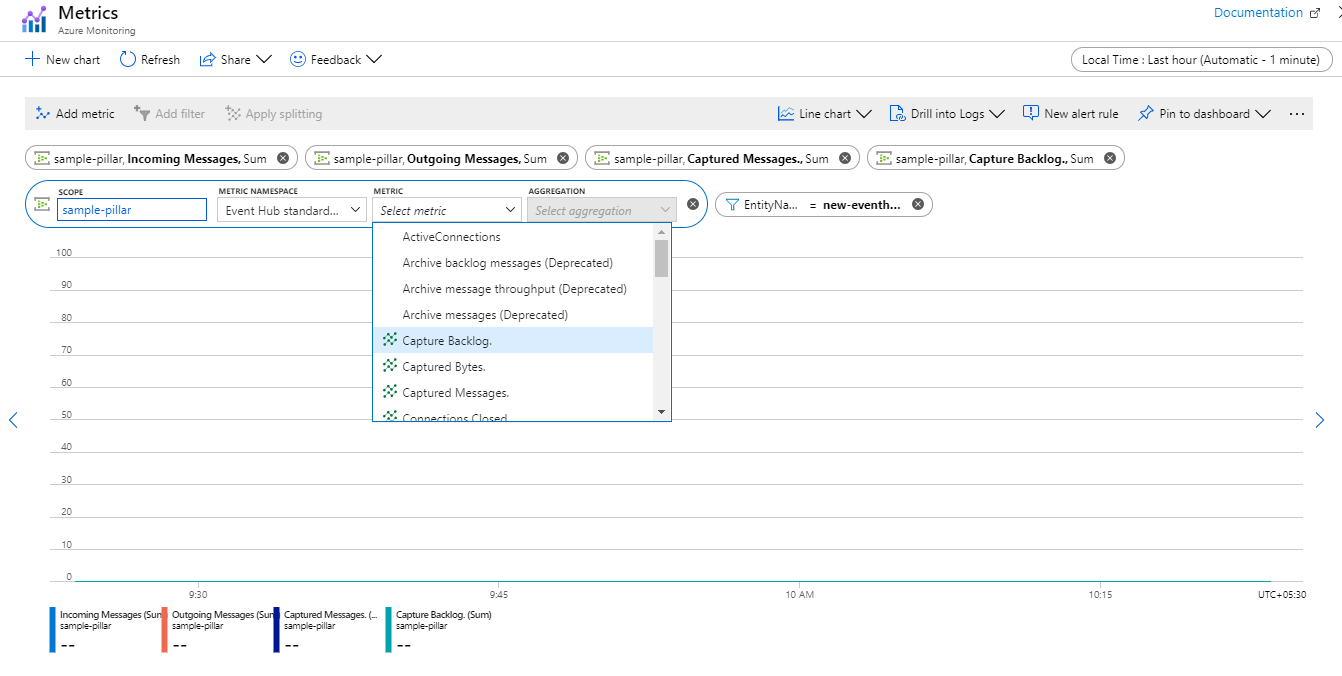

How to access the metrics?

The metrics can be accessed through the Azure portal, through APIs, or through Log Analytics. Metrics are enabled by default, and the most recent 30 days of data can be accessed. To retain data for longer, the metrics data can be archived to an Azure Storage account.

Metrics can be monitored directly from the Azure portal at the namespace level by clicking the Metrics option under the Monitoring section in the left blade.

To bring in the required metrics, specify the desired metric namespace and select from the metrics filtered to the scope of that event hub.

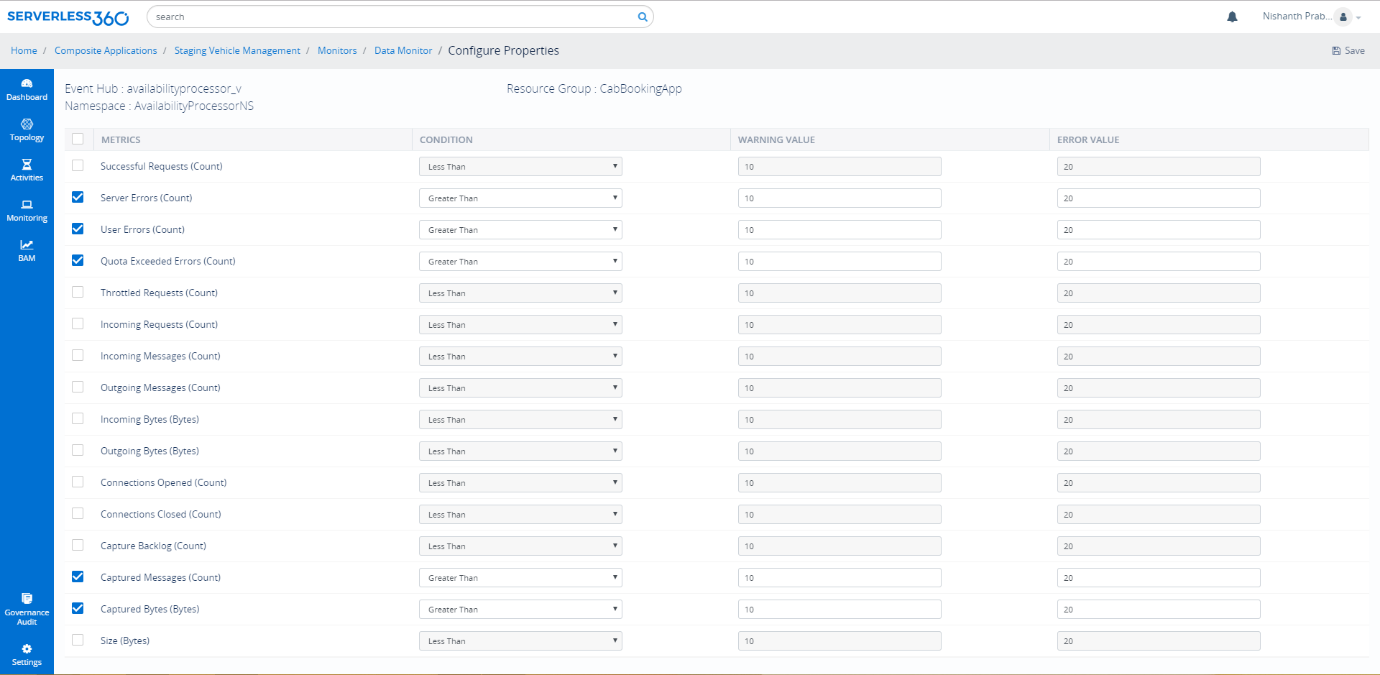

Metrics

The metrics are assigned based on various perspectives to attain a complete monitoring. The metrics are,

- Request Metrics

- Throughput Metrics

- Message Metrics

- Connection Metrics

- Event Hubs Capture Metrics

- Metrics dimension

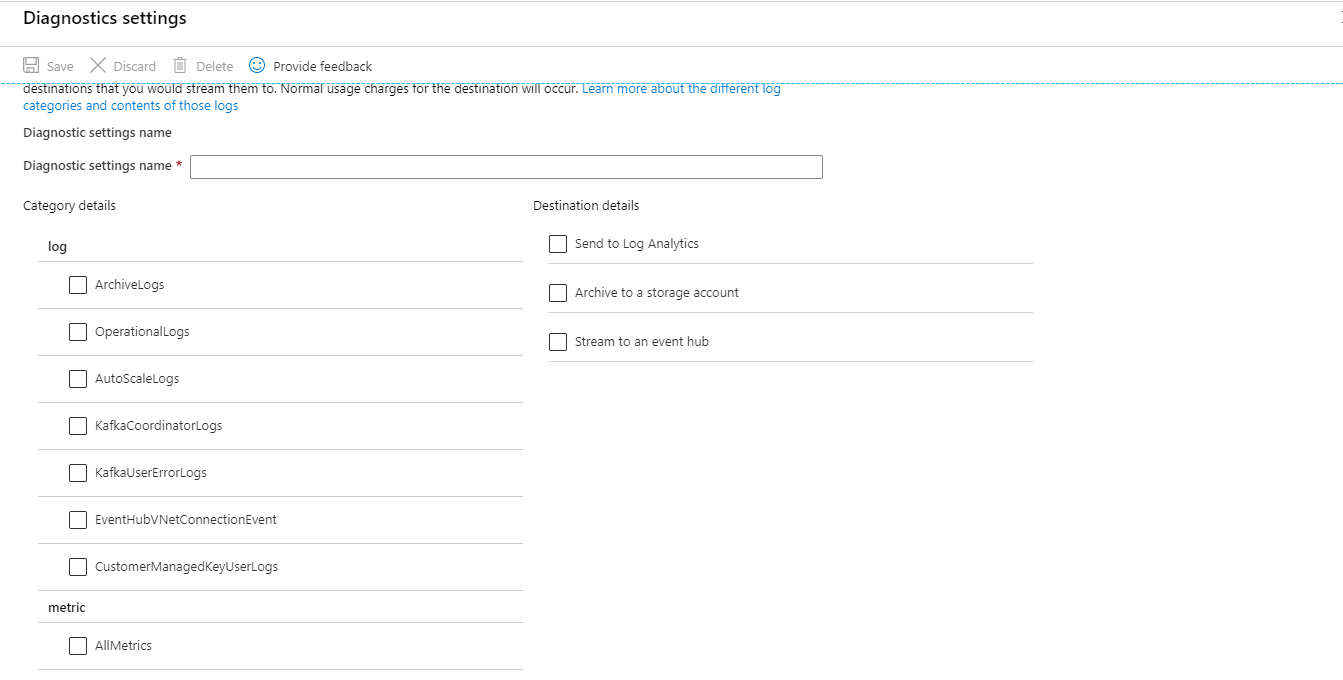

Diagnostic Logs

Diagnostic logs provide an overall view of every task that takes place. They cover activities from creation to deletion, including updates and activities at run time.

A diagnostic setting specifies the categories of logs and metrics to collect from the resource, and one or more destinations to stream them to. Event Hubs provides diagnostic logs in two categories:

- Archive Logs: Logs that are associated with Event Hubs archives.

- Operational Logs: Logs that provide information on all the activities (creation, deletion, and updating) that take place in the event hub.

Manage Azure Event Hub in Turbo360

Managing the activities in a service like Azure Event Hubs, which deals with millions of events, requires a tool with strong management and monitoring capabilities. Turbo360 is designed to meet the complex management needs of Azure services. To know more about Turbo360, sign up for the 15-day free trial.

The Vehicle Management scenario exhibits how powerful the Event Hubs are, but the real challenge is to manage it in Azure portal. There are certain challenges faced by Azure users to manage and monitor the event hubs in the Azure portal.

- Lack of deeper/integrated tooling

- No consolidated monitoring

- No dead-letter event processing in Event Grid

- No Auditing

To overcome these challenges, Turbo360 offers an extensive set of capabilities.

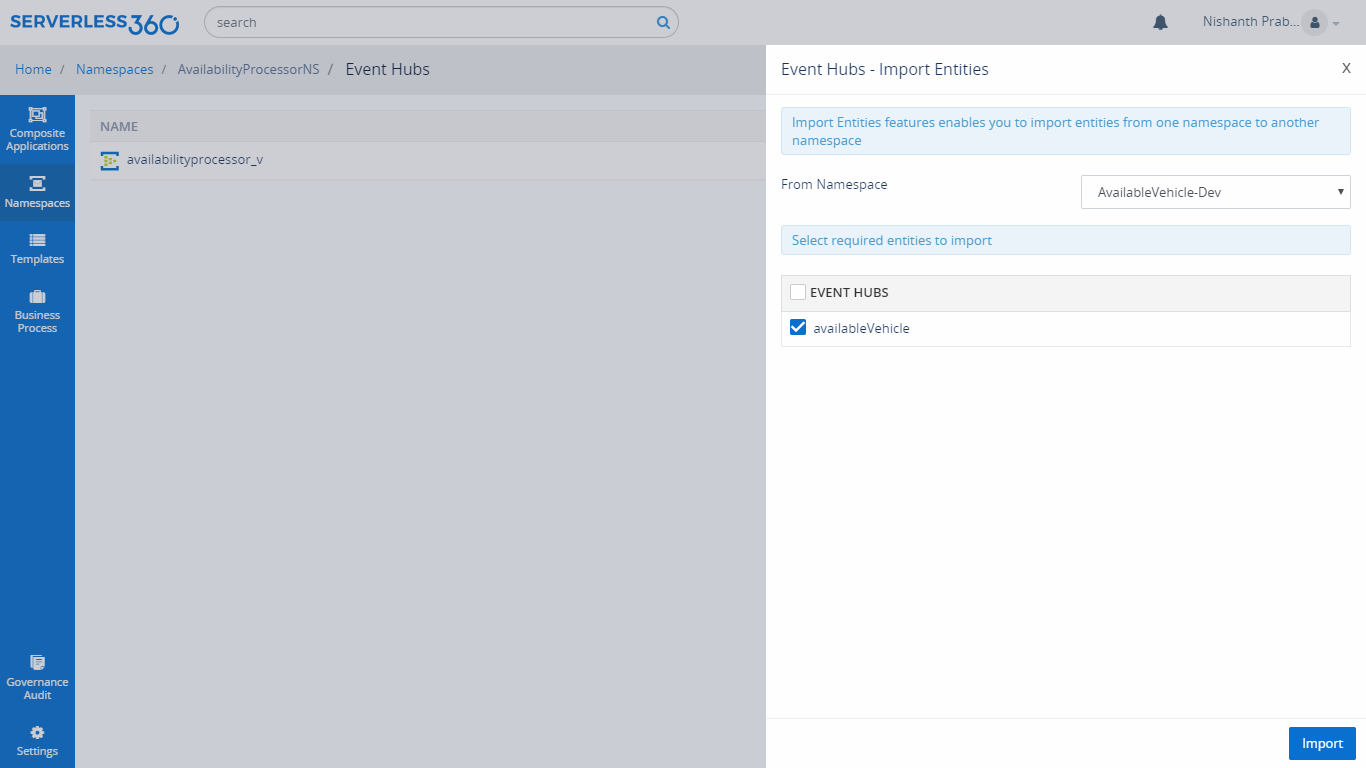

Integrated Tooling

Some business applications need to import an Azure event hub from a non-production namespace to a production namespace. There is no straightforward way to do this in the Azure portal. Turbo360 solves this with the import entities option on the namespaces tab of the Turbo360 portal. With this capability, the user can import Event Hub entities from a non-production namespace to a production namespace in a single click.

It is also possible to manage the shared access policy for Azure Event Hubs, view the properties of the Azure Event Hubs and Event Grids and create consumer group for Event Hubs.

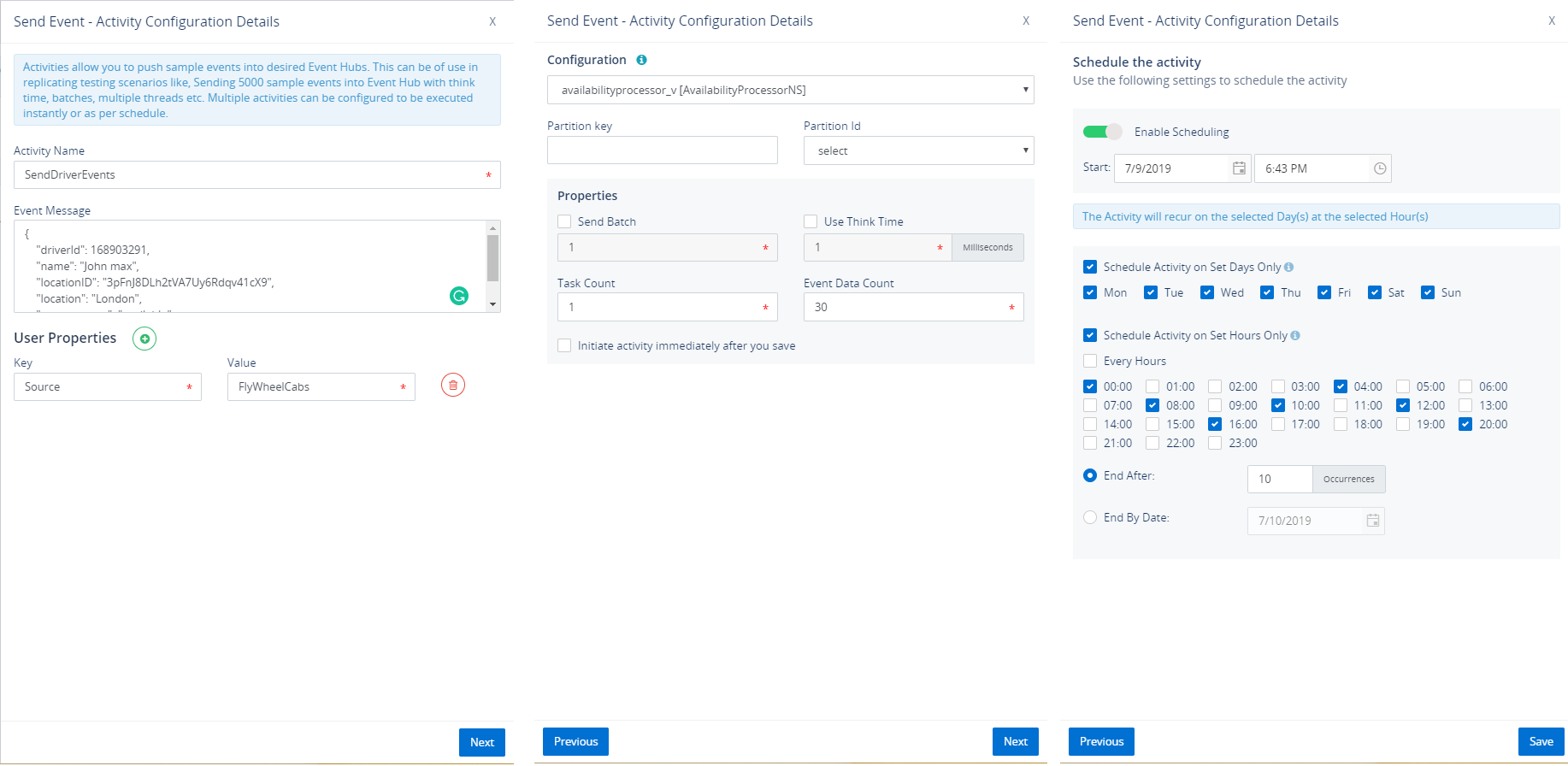

Activities

To test a business orchestration, you need to send some events to Azure Event Hubs so that they are delivered to the endpoint through the subscription. The Azure portal offers no way to test the orchestration like this. To solve this challenge, Turbo360 provides a capability called Activities, with which a user can send events to both Event Hubs and Event Grid topics. These Activities can also be scheduled.

Read more on this feature here.

Monitoring Azure Event Hub in Turbo360

Azure provides the entity level monitoring on their metrics, but the actual need would be Consolidated monitoring at the application level.

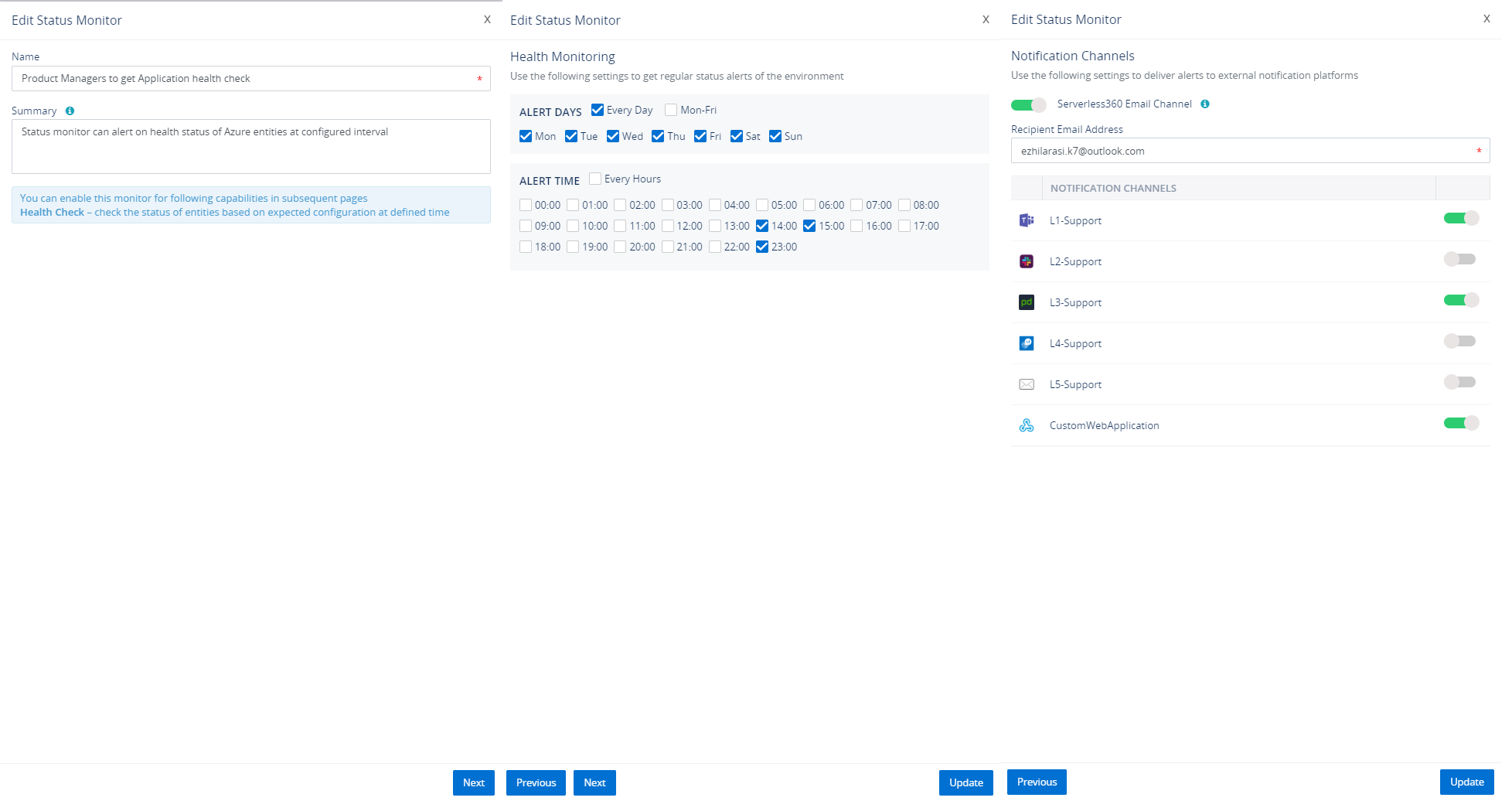

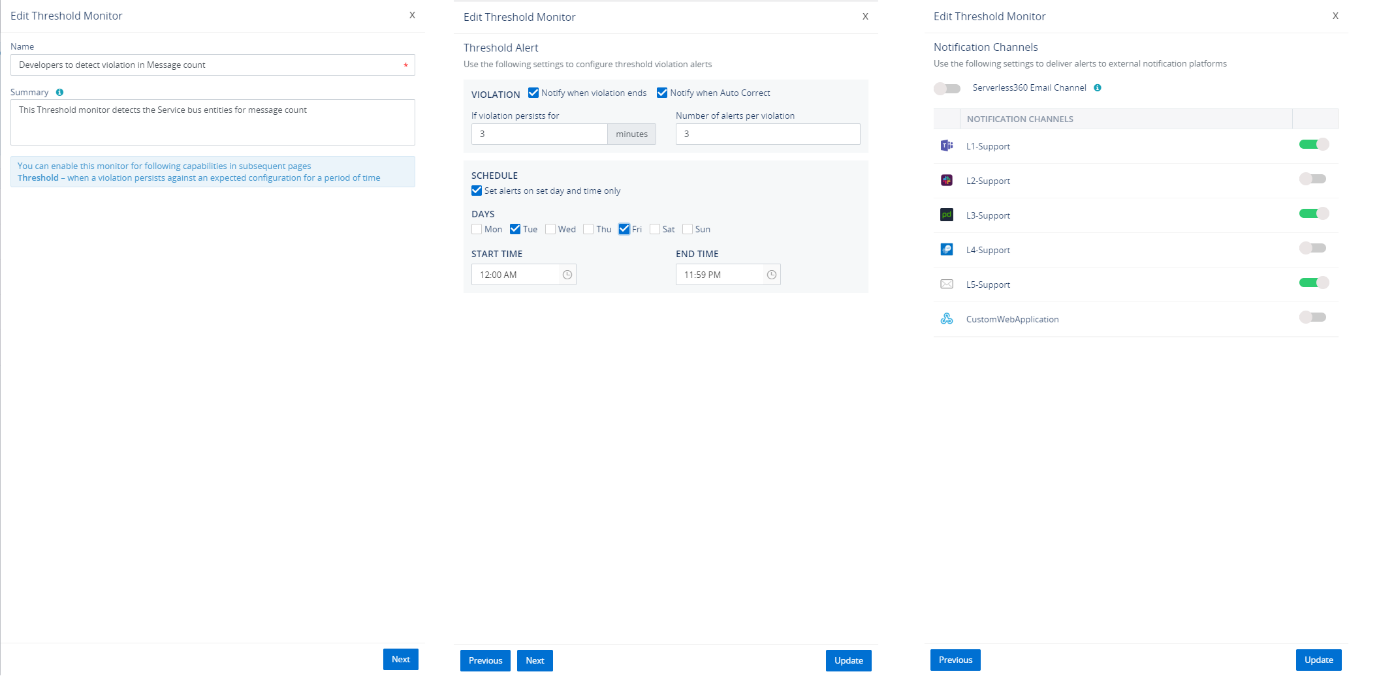

For monitoring Azure Event Hubs in multiple perspectives, Turbo360 has three types of monitors: Status Monitor, Threshold monitor and Data monitor.

Status Monitor

Choose Turbo360 status monitor to get application health reports at a specified time in a day representing the state of Azure Event Hubs against the desired values of its state. It is also possible to monitor the partition of the Azure Event Hubs.

Visit Status Monitor documentation page for more information.

Threshold Monitor

Monitor Azure Event Hubs when their state violates the desired values for a specified period, say a few seconds or minutes. For Azure Event Hubs, the Threshold Monitor can monitor the status and size of the partitions.

Visit Threshold Monitor documentation page for more information.

Data Monitor

There may be a need to monitor the performance of Azure Event Hubs, or the count of events in the Capture of an event hub. To monitor entities on an extensive set of metrics, Turbo360 provides a capability called the Data Monitor, which offers a calendar view of historical alerts.

Visit Data Monitor documentation page for more information.

Turbo360 provides a comprehensive way to manage and monitor your Azure Serverless components. Check the Azure Event Hub monitoring feature page for more insights.


Use Cases

Event Stream Processing

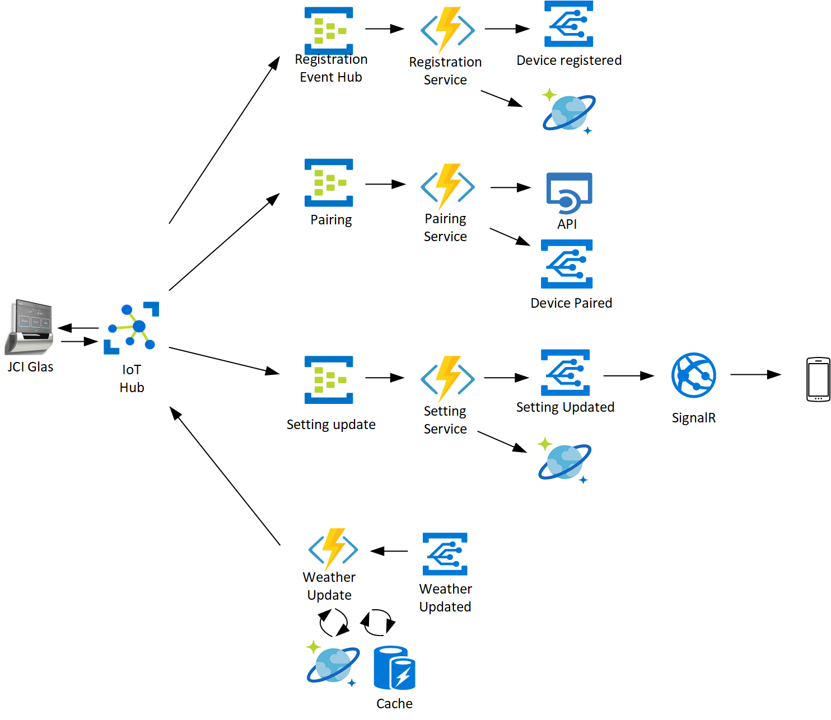

GLAS Smart Thermostat by Johnson Controls uses Azure Serverless to connect its room thermostat with customers’ handheld devices. The GLAS Smart Thermostat monitors and reports the indoor and outdoor air quality so that you can manage the air you breathe. Initially, the team used VMs to connect and process the data between the thermostat and the customers’ devices. Considering their vertical growth in the expanding consumer market, they made the paradigm shift to Serverless.

The team tested this scenario with a simulation of 20,000 IoT devices in addition to the real devices.

Architecture

In this architecture, you can see four different activities, all controlled by different Azure Serverless components like Azure Event Hubs, Functions, Event Grid, Cosmos DB, and API Management. All the communication between the thermostat, Azure and the customer device is sent through the IoT Hub. As a first step when the customer installs a thermostat, JCI GLAS device sends a message to the IoT Hub to register the device. This event triggers the Functions app to save the data in Cosmos DB. The function app also raises an event into the Event Grid to trigger events like the registration success message to the customer mobile devices.

IMAGE CREDITS-MICROSOFT

The second step is to pair both the JCI GLAS device and the customer mobile device. Again, the IoT hub triggers a pairing service to the Event Grid through the pairing Event Hub and Functions app.

Finally, the user sets the temperature range in which the device should trigger a message, and this setting is forwarded into Azure through the IoT Hub. The Event Hub not only sends the settings into Cosmos DB through Functions but also raises an event into the Event Grid and integrates with SignalR services to update the user’s mobile device.

The next service sends a message from Azure to the GLAS device. This message is normally a local weather update from the internet. In this case, the weather update is raised as an event into the Event Grid, which triggers Azure Functions to check the cache to see whether the settings are up to date and then sends the message to the GLAS device through the IoT Hub.

Here you can see that all the messages go through one IoT Hub to three Event Hubs based on the message type. In some designs, the message from the IoT Hub directly triggers Azure Functions and is segregated through if/else code. But given the routing mechanism and throttling in Event Hubs, it makes sense to use Event Hubs.

Documentation and Webinars

This Webinar covers in detail the capabilities of Turbo360 to facilitate managing and monitoring Azure Event Hubs. All concepts are dealt with real-world business use cases for better understanding.

This content will be continuously revamped to stay up to date.

Turbo360 helps to streamline Azure monitoring, distributed tracing, documentation and optimize cost.

Frequently Asked Questions

How does Azure Event Hub work?

Event Hubs represents the “front door” for an event pipeline, often called an event ingestor in solution architectures. A component or service that lies between event publishers and event consumers to decouple the production of an event stream from its consumption is known as an event ingestor. Event Hubs is a unified streaming infrastructure with a temporal retention buffer that allows event producers and consumers to be separated.


What is Event Hub schema registry?

Event Hubs contains the Azure Schema Registry, which serves as a central repository for schema documents for event-driven and messaging-centric applications. It allows your producer and consumer applications to communicate data without having to worry about managing and sharing the schema. The Schema Registry also provides a simple governance framework for reusable schemas, as well as a grouping construct that defines the relationship between schemas (schema groups).


What is the maximum allowed message size in basic/standard tier in Event Hub?

The maximum size of a single publication is 256 KB in the basic tier and 1 MB in the standard tier.

How do I receive events on Event Hub?

To receive events, write a PartitionReceiver. An event hub can have multiple partitions, each of which serves as a queue for storing event data. The event hub itself decides which partition newly arriving data is sent to; the messages are distributed in a round-robin fashion.
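Round-robin distribution can be illustrated with a small routing sketch. `PartitionRouter` is a hypothetical class, not the service's implementation. It also shows the common alternative of routing by a partition key, where the SHA-256 hash is an assumption chosen for this sketch and not the hash function the service uses.

```python
import hashlib
from itertools import count
from typing import Optional


class PartitionRouter:
    """Illustrative routing: round robin without a key, stable hashing with one."""

    def __init__(self, partition_count: int) -> None:
        if partition_count < 1:
            raise ValueError("partition_count must be at least 1")
        self._partition_count = partition_count
        self._counter = count()

    def route(self, partition_key: Optional[str] = None) -> int:
        if partition_key is None:
            # No key: hand out partitions in turn so events spread evenly.
            return next(self._counter) % self._partition_count
        # With a key: the same key always maps to the same partition,
        # which keeps related events together and in order.
        digest = hashlib.sha256(partition_key.encode("utf-8")).digest()
        return int.from_bytes(digest[:8], "big") % self._partition_count
```

Round robin favours even load and availability, while keyed routing favours correlation of related events.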

Which method is used to send events to Event Hub?

To deliver events to an event hub, create an EventHubClient instance and send the events asynchronously through its SendAsync method. This method takes a single EventData instance as its parameter and sends it to the event hub asynchronously.

What are the different protocols supported for sending events to Event Hub?

Azure Event Hubs supports three protocols for consumers and producers: AMQP, Kafka, and HTTPS.

Can a sender send an event to particular partition of Event Hub?

Yes. The SendAsync(EventData) method of a PartitionSender sends EventData to a specific event hub partition. The target partition is determined when the PartitionSender is created. This send pattern emphasizes data correlation over general availability and latency.