Azure Event Grid was the missing piece for messaging in the cloud. Instead of extensive polling or ‘hammer-polling,’ a very resource-intensive process, you now get a ‘tap on the shoulder’: here is an event, ‘an image is available for processing.’ Event Grid is a service in Azure that enables central management of events. It provides intelligent routing with filters and standardizes on an event schema.

Event Grid

Event Grid offers a mechanism for reactive event handling. An application can subscribe to events and handle them accordingly, for instance storage blob events, provisioning notifications in an Azure subscription, IoT device signals, or even custom events.

Event Grid handles events, not messages. A message has an intent and requires an action – for instance, an order that needs to be fulfilled. An event is a fact, something that has happened and you can react to it or not. Hence there is a clear distinction between messaging and events. Thus, there are services for both available in the Azure Platform – Clemens Vasters explains this in the blog post from last year: Events, Data Points, and Messages – Choosing the right Azure messaging service for your data.

Pub-Sub Model

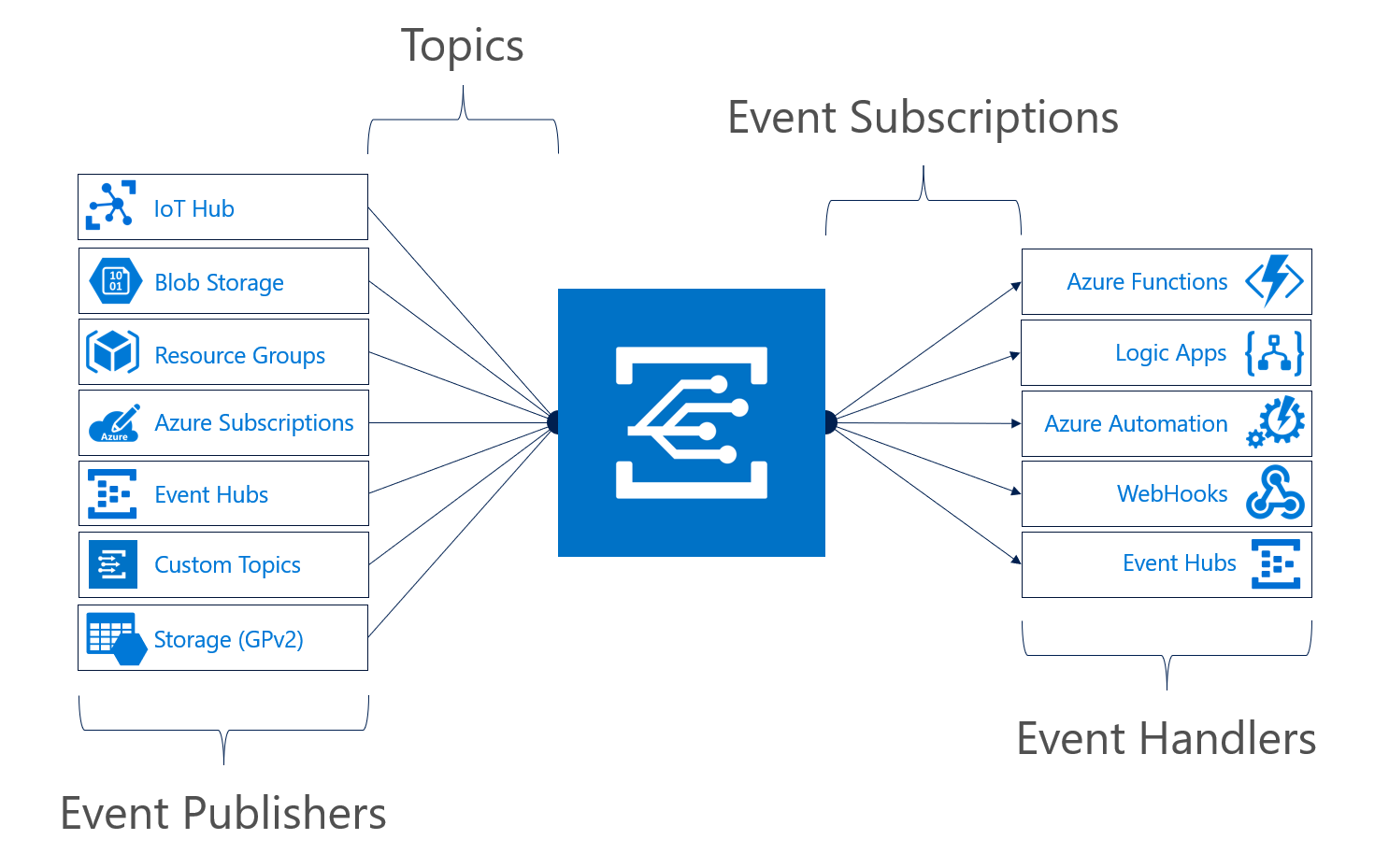

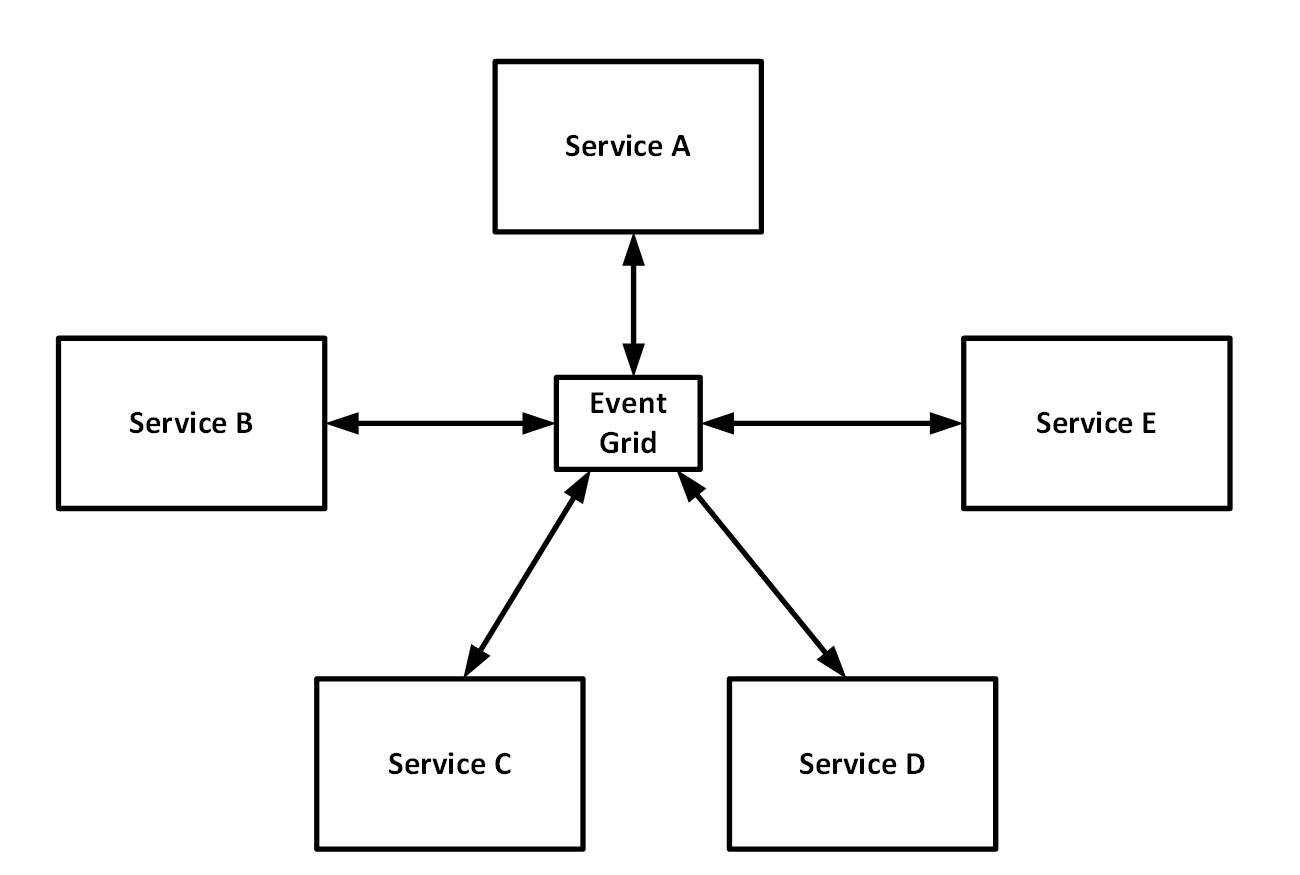

The concept of Azure Event Grid is simple: it is a pub-sub model. An event source pushes events to Azure Event Grid, and event handlers subscribe to events. An event source or publisher (an Azure service like Storage or IoT Hub, or a third-party source) emits an event, for instance blobCreated or blobDeleted, and sends it to a topic. Each topic can have one or multiple subscribers (event handlers). You can configure an Azure service, if supported, as an event publisher, or you can create a custom Azure Event Grid topic. Subsequently, event handlers in Azure like Functions, WebHooks, and Event Hubs can react to the events and process them.
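The push-based pub-sub idea described above can be illustrated with a small in-memory sketch. This is not the Event Grid API; `InMemoryTopic` and the two list-based handlers are hypothetical names used only to show that the topic calls every subscriber, and that subscribers never poll.

```python
from typing import Callable, List

Event = dict
Handler = Callable[[Event], None]


class InMemoryTopic:
    """Toy stand-in for a topic (hypothetical, not the Event Grid SDK)."""

    def __init__(self) -> None:
        self._handlers: List[Handler] = []

    def subscribe(self, handler: Handler) -> None:
        # Register an event handler (a subscriber) on this topic.
        self._handlers.append(handler)

    def publish(self, event: Event) -> int:
        # Push model: the topic invokes each handler; handlers never poll.
        for handler in self._handlers:
            handler(event)
        return len(self._handlers)


# Two subscribers that simply record what they receive.
received_by_function: List[Event] = []
received_by_webhook: List[Event] = []

topic = InMemoryTopic()
topic.subscribe(received_by_function.append)
topic.subscribe(received_by_webhook.append)

delivered = topic.publish({
    "eventType": "Microsoft.Storage.BlobCreated",
    "subject": "/blobServices/default/containers/images/blobs/LicensePlate1.png",
})
```

One published event reaches both subscribers, which is the fan-out behavior of a topic with multiple event handlers.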

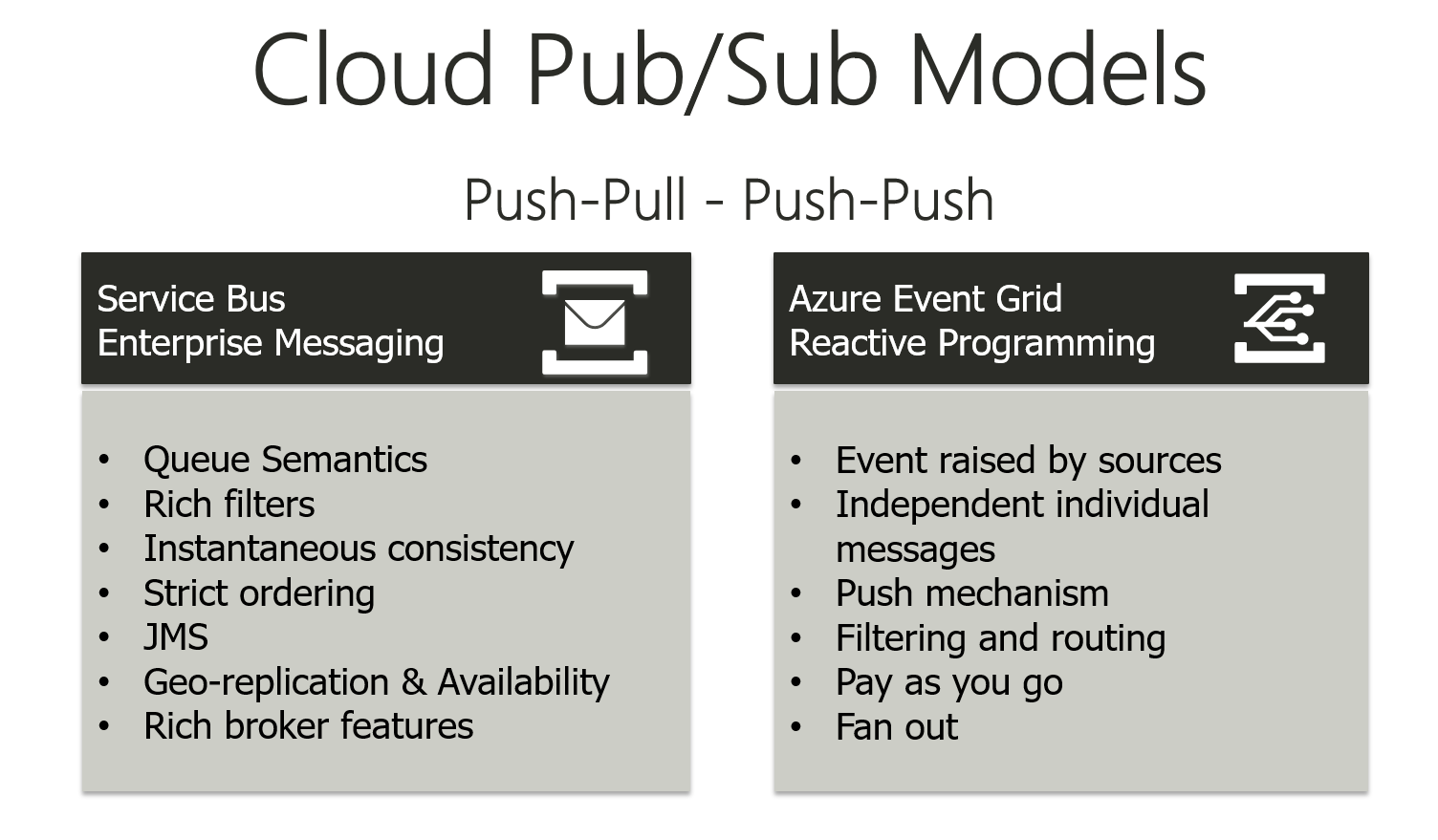

The pub-sub model of Azure Event Grid differs from Service Bus topics and subscriptions. Service Bus topics and subscriptions are push-pull, while Azure Event Grid is push-push. There are other differences too, as shown in the picture below.

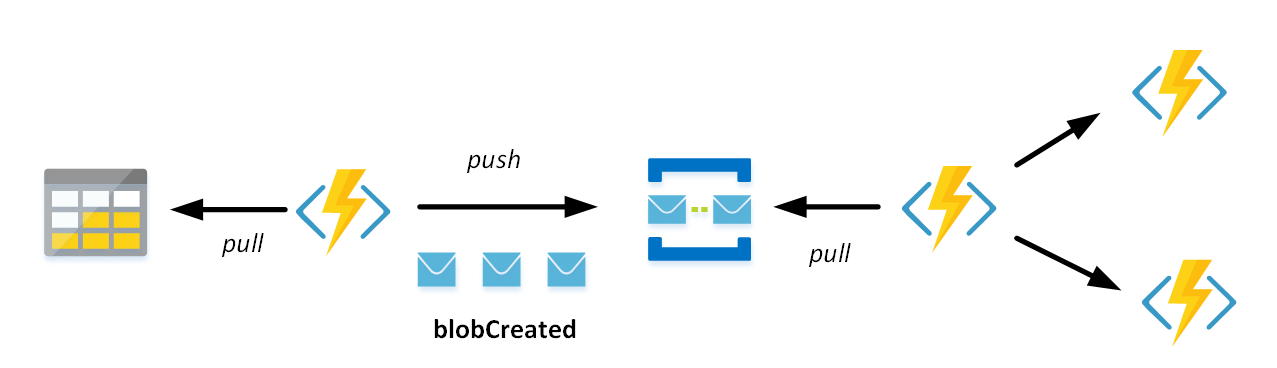

Consider a function that listens to a blob storage container and sends a message to a queue each time a new blob is created. Another function can then listen to the queue, process the blob, and call two other functions (or place a queue between that function and the other two). The diagram below shows how push-pull would work using a Service Bus Queue.

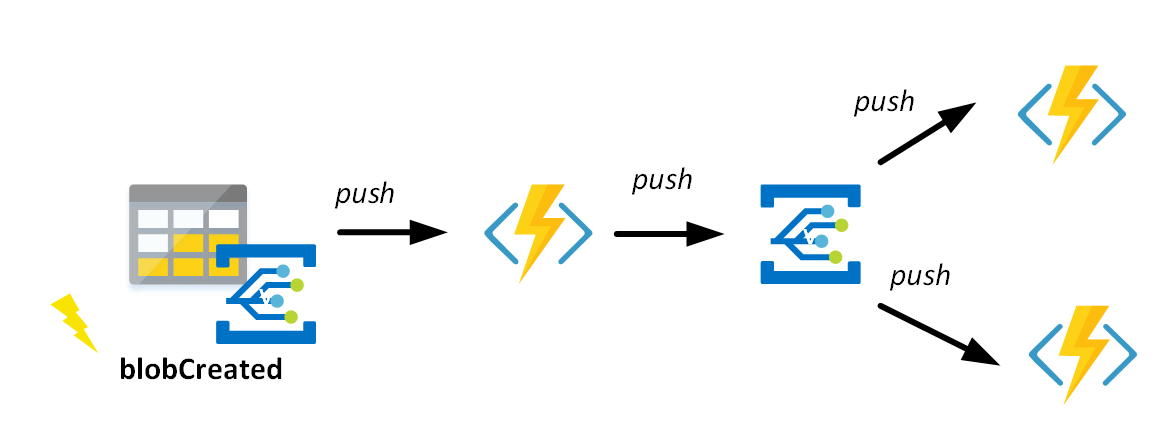

With Event Grid, you can have a different approach as shown in the diagram below.

You can view Event Grid as the backbone of event-driven computing in Microsoft Azure, and it brings a few benefits to the enterprise. With the push-push pub-sub model you can be more efficient with resources. Besides efficiency, Event Grid delivers:

- Native integrations (point and click) in a serverless way;

- Fan out to multiple places;

- Reliable delivery of events within 24 hours;

- Intelligent routing of events with types and filters.

These benefits will become apparent in this blog post.
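The routing by types and filters mentioned in the list above can be sketched as follows. This is a local illustration, not the service's implementation: the `SubscriptionFilter` class is hypothetical, and its fields are modeled on filtering by event type and by subject prefix and suffix.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class SubscriptionFilter:
    """Hypothetical local model of a subscription filter."""

    included_event_types: List[str] = field(default_factory=list)  # empty means all types
    subject_begins_with: str = ""
    subject_ends_with: str = ""

    def matches(self, event: dict) -> bool:
        # Reject events whose type is not in the allow-list (if one is set).
        if self.included_event_types and event.get("eventType") not in self.included_event_types:
            return False
        subject = event.get("subject", "")
        # An empty prefix or suffix matches every subject.
        return subject.startswith(self.subject_begins_with) and subject.endswith(self.subject_ends_with)


# Only react to newly created .png blobs in the "images" container.
png_created = SubscriptionFilter(
    included_event_types=["Microsoft.Storage.BlobCreated"],
    subject_begins_with="/blobServices/default/containers/images/",
    subject_ends_with=".png",
)

created = {
    "eventType": "Microsoft.Storage.BlobCreated",
    "subject": "/blobServices/default/containers/images/blobs/LicensePlate1.png",
}
deleted = dict(created, eventType="Microsoft.Storage.BlobDeleted")

is_match = png_created.matches(created)
is_deleted_match = png_created.matches(deleted)
```

Filtering at the subscription means a handler is only invoked for the events it cares about, instead of receiving everything and discarding most of it.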

Reversal of dependencies

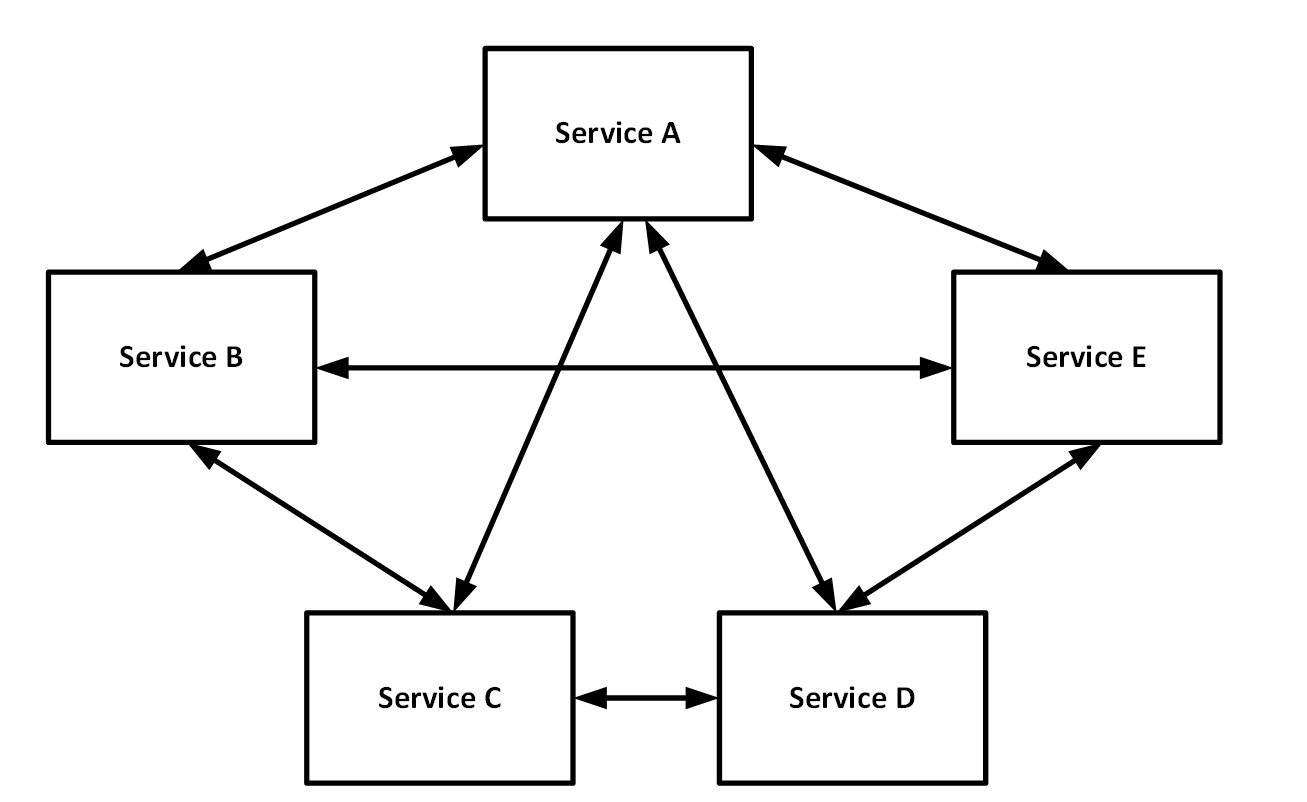

With Event Grid in Azure, you as a developer can reverse the dependencies in an existing cloud solution, where services support business processes by depending on each other. In this type of solution, each service has some logic to communicate with others across the architecture. Over time, the architecture becomes complex, a spaghetti of services.

However, by leveraging Event Grid, you can loosen these dependencies and make the services more event-driven. The services communicate by pushing events to a central place, i.e., Event Grid.

By pushing events to a central place you fundamentally unify the communication between services. Tom Kerkhove discusses this in his blog Exploring Event Grid.

Toll booth Scenario

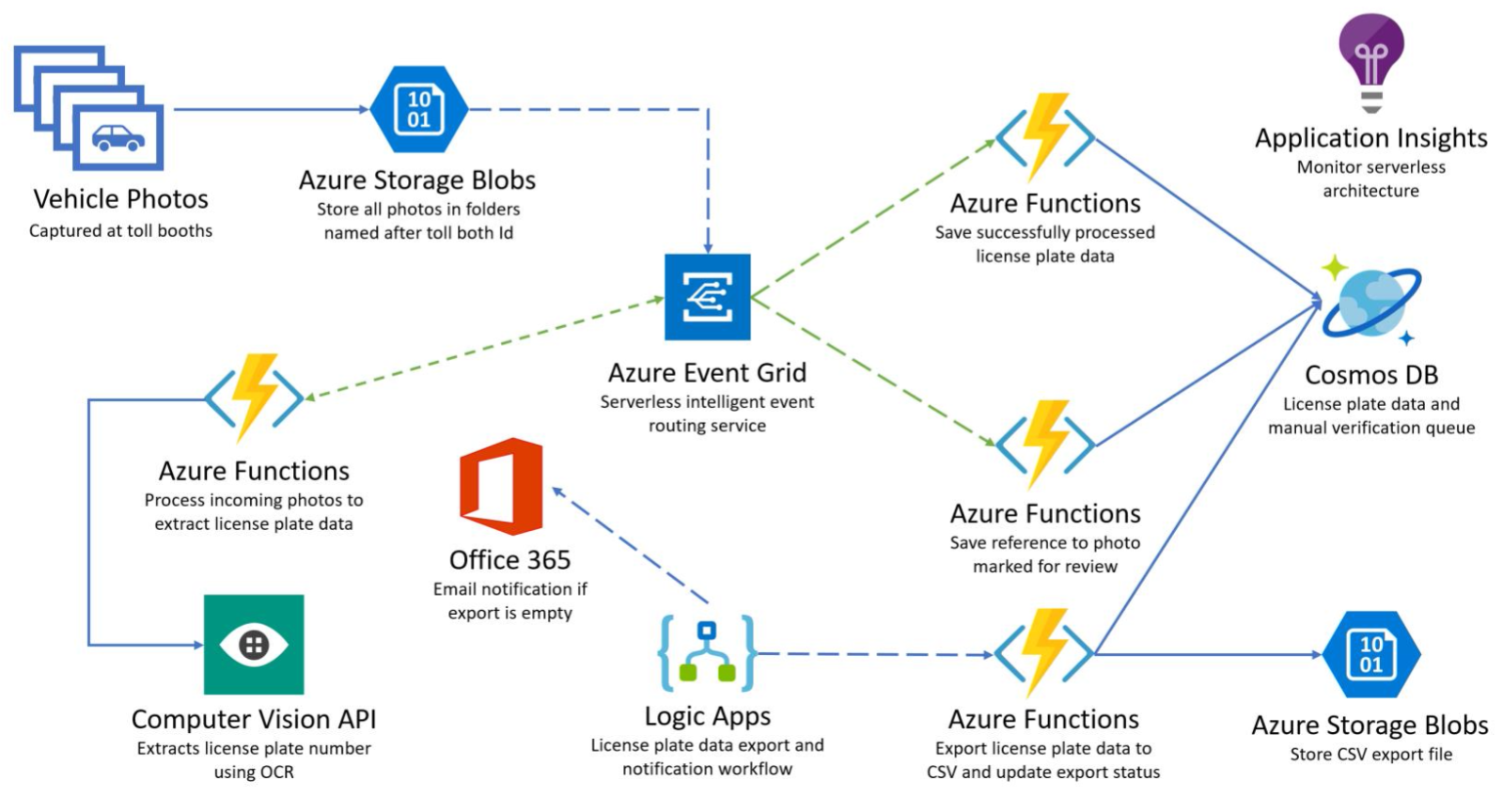

A typical use case for Event Grid is processing an image with the Vision API. Azure Blob Storage emits a blobCreated event, which is subsequently handled by a Function or Logic App subscribing to that event. In the following scenario, Event Grid plays a crucial role in handling blobCreated events, with several functions subscribing to that event.

In the above scenario, a camera registers every car going through a toll booth. The picture of the vehicle includes a license plate. These images are uploaded in batches to an Azure Storage account, which is configured as an event publisher. Every image is stored as a blob and thus results in an event being emitted to the Event Grid topic. Subsequently, several functions subscribe to the events:

- A Function to push the blob (image) to the Computer Vision API, which extracts the license plate number through its Optical Character Recognition (OCR) feature. The Function then pushes the result as an event to another Event Grid topic.

- A Function to save the license plate (the result of the Vision API) to Cosmos DB.

- A Function to save a reference of the image in the storage account to Cosmos DB.

The above scenario shows how a developer can build several functions to handle the events. The developer's job is to build reactive components, i.e., functions (tasks) in this case. The events (cars passing a toll booth) are handled by a loosely coupled, scalable, and serverless type of solution. In the following paragraphs, we will further discuss some of the critical concepts of Event Grid with the given scenario.

Azure Event Grid Schema

The events in Event Grid adhere to a predefined schema, a JSON schema with several properties. Events are sent to Event Grid as a JSON array with a maximum size of 1 MB, and each event in the array is limited to 64 KB. If you send an array of events that exceeds these limits, you will receive the HTTP response 413 Payload Too Large. Note that even a single event is sent as an array.

The following schema shows the properties of an event:

    [
      {
        "topic": string,
        "subject": string,
        "id": string,
        "eventType": string,
        "eventTime": string,
        "data": {
          object-unique-to-each-publisher
        },
        "dataVersion": string,
        "metadataVersion": string
      }
    ]
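As a rough illustration of the schema and the size limits described above, the following sketch builds a custom event and serializes it as a one-element array. The helper names `build_event` and `serialize_batch` and the event type `Tollbooth.LicensePlateRecognized` are made up for this example. It also assumes that the publisher may leave out `topic` and `metadataVersion`, so check the schema documentation before relying on that.

```python
import json
import uuid
from datetime import datetime, timezone
from typing import List

MAX_EVENT_BYTES = 64 * 1024    # per-event limit quoted in the text
MAX_BATCH_BYTES = 1024 * 1024  # per-array limit quoted in the text


def build_event(subject: str, event_type: str, data: dict, data_version: str = "1.0") -> dict:
    """Build one event with the properties from the schema above."""
    return {
        "id": str(uuid.uuid4()),
        "subject": subject,
        "eventType": event_type,
        "eventTime": datetime.now(timezone.utc).isoformat(),
        "data": data,
        "dataVersion": data_version,
        # Assumption: "topic" and "metadataVersion" are omitted by the publisher.
    }


def serialize_batch(events: List[dict]) -> bytes:
    """Serialize events as a JSON array, enforcing the limits locally."""
    for event in events:
        size = len(json.dumps(event).encode("utf-8"))
        if size > MAX_EVENT_BYTES:
            raise ValueError(f"event {event['id']} is {size} bytes, over the 64 KB limit")
    body = json.dumps(events).encode("utf-8")
    if len(body) > MAX_BATCH_BYTES:
        raise ValueError(f"batch is {len(body)} bytes, over the 1 MB limit")
    return body


# Even a single event is sent as an array.
body = serialize_batch([
    build_event(
        subject="/tollbooth/lane1",
        event_type="Tollbooth.LicensePlateRecognized",  # hypothetical event type
        data={"licensePlate": "ABC123"},
    )
])
```

Checking the sizes before sending avoids a round trip that would only come back as 413 Payload Too Large.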

For example, the event published for an Azure Blob storage blob in our scenario:

    [{
      "topic": "/subscriptions/0bf166ac-9aa8-4597-bb2a-a845afe01415/resourceGroups/RG_LicensePlates_Demo/providers/Microsoft.Storage/storageAccounts/licenseplatesstorage",
      "subject": "/blobServices/default/containers/images/blobs/LicensePlate1.png",
      "eventType": "Microsoft.Storage.BlobCreated",
      "eventTime": "2018-06-05T08:35:26.2778593Z",
      "id": "2c6f7c22-201e-00b7-0aa8-fca7ce06e319",
      "data": {
        "api": "PutBlob",
        "clientRequestId": "32a51839-6bf5-44d6-acfe-7d284229988c",
        "requestId": "2c6f7c22-201e-00b7-0aa8-fca7ce000000",
        "eTag": "0x8D5CABF497519C6",
        "contentType": "application/octet-stream",
        "contentLength": 41860,
        "blobType": "BlockBlob",
        "url": "https://licenseplatesstorage.blob.core.windows.net/images/LicensePlate1.png",
        "sequencer": "000000000000000000000000000008BC0000000003fd850f",
        "storageDiagnostics": {
          "batchId": "fbb4e831-82b2-4e64-b0e5-0735449f9cb7"
        }
      },
      "dataVersion": "",
      "metadataVersion": "1"
    }]

The image processing function in our scenario can handle the event and use the URL in the data payload to download the image from the storage account container. A REST call then pushes the downloaded image to the Computer Vision endpoint. Finally, the function reads the JSON result and pushes it to another Event Grid topic as an event.
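The first step of that function, picking the blob URL out of the event payload, could look roughly like the sketch below. The function names are hypothetical, and the download and the Computer Vision call are deliberately left out. The sample body is a trimmed copy of the event shown above.

```python
import json
from typing import List, Tuple
from urllib.parse import urlparse


def blob_urls_from_events(body: str) -> List[str]:
    """Return the blob URLs of all BlobCreated events in a delivered array."""
    urls = []
    for event in json.loads(body):
        # Ignore anything that is not a blob creation event.
        if event.get("eventType") != "Microsoft.Storage.BlobCreated":
            continue
        urls.append(event["data"]["url"])
    return urls


def container_and_blob(url: str) -> Tuple[str, str]:
    """Split a blob URL into its container name and blob name."""
    path = urlparse(url).path.lstrip("/")
    container, _, blob_name = path.partition("/")
    return container, blob_name


sample_body = json.dumps([{
    "subject": "/blobServices/default/containers/images/blobs/LicensePlate1.png",
    "eventType": "Microsoft.Storage.BlobCreated",
    "data": {
        "api": "PutBlob",
        "url": "https://licenseplatesstorage.blob.core.windows.net/images/LicensePlate1.png",
    },
}])

urls = blob_urls_from_events(sample_body)
container, blob_name = container_and_blob(urls[0])
```

Because events arrive as an array, the handler loops over the payload rather than assuming exactly one event per request.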

Security

The security of Event Grid consists of several parts. The first part is Role-based Access Control (RBAC) on the Event Grid resource: the person creating a new subscription needs to have the Microsoft.EventGrid/EventSubscriptions/Write permission. Second, there is validation of WebHooks, the first and foremost mechanism for listening to events: every newly registered WebHook needs to be validated by Event Grid first. This validation happens implicitly when you create subscriptions to events on an event source like a Storage Account V2, where you can hook events to a Function or Logic App using the events feature in the storage account. In our scenario, the subscriptions to events are validated implicitly in this way.

If you want to subscribe to events using custom code, for example an on-premises client, you will need to respond to the validation code that Event Grid sends out. The validation request that Event Grid sends to the client looks like this:

    [{
      "id": "2d1781af-3a4c-4d7c-bd0c-e34b19da4e66",
      "topic": "/subscriptions/xxxxxxxx-xxxx-xxxx-xxxx-xxxxxxxxxxxx",
      "subject": "",
      "data": {
        "validationCode": "512d38b6-c7b8-40c8-89fe-f46f9e9622b6"
      },
      "eventType": "Microsoft.EventGrid.SubscriptionValidationEvent",
      "eventTime": "2018-01-25T22:12:19.4556811Z",
      "metadataVersion": "1",
      "dataVersion": "1"
    }]

The client responds with an HTTP 200 OK and echoes the validation code in the response body. If you want to send custom events, use SAS tokens or key authentication to publish an event to a topic. For more details on security and authentication of Event Grid, refer to the official documentation here.
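A custom endpoint could handle the validation handshake roughly as in the sketch below. It is framework-agnostic: `handle_webhook_post` is a hypothetical function that takes the raw POST body and returns a status code and response body, and wiring it into a real web server is left out. The reply carries the code back in a `validationResponse` property.

```python
import json
from typing import Tuple

VALIDATION_EVENT_TYPE = "Microsoft.EventGrid.SubscriptionValidationEvent"


def handle_webhook_post(body: str) -> Tuple[int, str]:
    """Return (status code, response body) for a delivered event array."""
    for event in json.loads(body):
        if event.get("eventType") == VALIDATION_EVENT_TYPE:
            # Echo the code back so the endpoint is accepted as a subscriber.
            code = event["data"]["validationCode"]
            return 200, json.dumps({"validationResponse": code})
    # Regular events: process them here, then acknowledge.
    return 200, ""


validation_request = json.dumps([{
    "id": "2d1781af-3a4c-4d7c-bd0c-e34b19da4e66",
    "subject": "",
    "data": {"validationCode": "512d38b6-c7b8-40c8-89fe-f46f9e9622b6"},
    "eventType": "Microsoft.EventGrid.SubscriptionValidationEvent",
    "eventTime": "2018-01-25T22:12:19.4556811Z",
    "metadataVersion": "1",
    "dataVersion": "1",
}])

status, response_body = handle_webhook_post(validation_request)
```

The same endpoint serves both the one-time validation request and all later event deliveries, so the handler has to distinguish them by event type.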

Guaranteed delivery of events

A key aspect of the scenario is the guaranteed delivery of events. By default, Event Grid tries to deliver each event at least once and retries with exponential backoff: it keeps sending the event to the event handler until the handler acknowledges the request with either an HTTP 200 OK or an HTTP 202 Accepted. After an error response, the backoff mechanism waits progressively longer between retries. Retrying continues until 24 hours have passed, after which Event Grid deletes the event. Refer to the official documentation here.
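The retry behavior described above can be simulated with a short sketch. The first wait of 10 seconds and the doubling factor are made-up values for illustration and are not Event Grid's actual retry schedule. Only the 24-hour limit and the accepted status codes come from the text. Time is simulated, so nothing sleeps.

```python
from typing import Callable, List, Tuple

ACKNOWLEDGED = (200, 202)  # status codes that stop the retries


def deliver_with_backoff(
    send: Callable[[], int],
    first_wait_s: float = 10.0,            # assumed value, for illustration only
    factor: float = 2.0,                   # assumed value, for illustration only
    time_to_live_s: float = 24 * 60 * 60,  # 24 hours, as stated in the text
) -> Tuple[bool, List[float]]:
    """Try to deliver until acknowledged or until the time to live runs out.

    Returns whether delivery succeeded and the simulated waits between tries.
    """
    elapsed = 0.0
    wait = first_wait_s
    waits: List[float] = []
    while True:
        if send() in ACKNOWLEDGED:
            return True, waits
        if elapsed + wait > time_to_live_s:
            # The next retry would fall outside the window: drop the event.
            return False, waits
        waits.append(wait)
        elapsed += wait
        wait *= factor  # progressively longer waits between retries


# A handler that fails twice and then accepts the event.
responses = iter([503, 503, 200])
delivered, waits = deliver_with_backoff(lambda: next(responses))

# A handler that never recovers: the event is eventually dropped.
dropped_delivered, dropped_waits = deliver_with_backoff(lambda: 500)
```

Because delivery is at least once, a handler may see the same event more than once and should be written to tolerate duplicates.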

Fan-in fan-out Scenario

You can view Event Grid as serverless; that is, you do not have to manage any infrastructure, you pay per operation, and Microsoft handles the scaling for you. Event Grid leverages Service Fabric under the hood and thus scales when the workload increases.

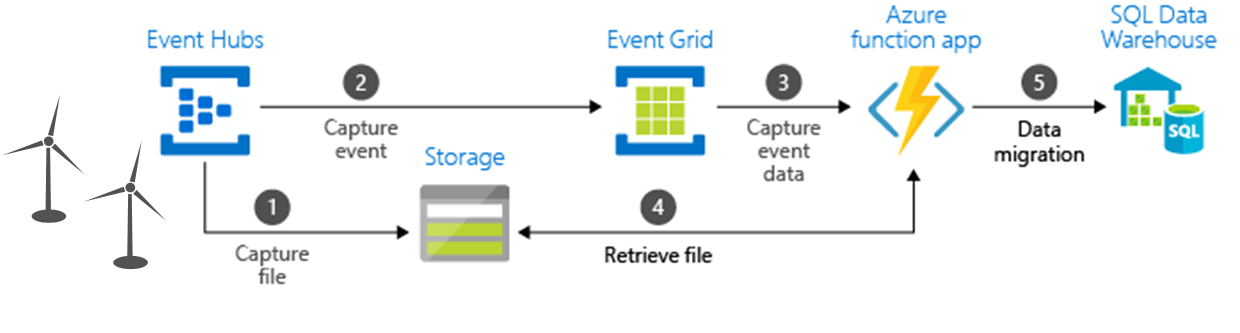

Event Hubs and Event Grid work together seamlessly in ingestion (fan-in) and push (fan-out) scenarios. Both scale easily when workloads increase and do not require you to manage infrastructure. In the following situation, wind farm data (per turbine) is sent to an event hub that has the Capture feature enabled.

Event Hubs Capture pulls the data from the stream of wind farm data and generates storage blobs with the data in Avro format. When Capture creates a blob (1), a blobCreated event is generated and emitted to Event Grid (2). Subscribers receive the event and handle it accordingly; in this case, an Azure Function (3) gets the event. The function then retrieves the Avro file from blob storage using the URL in the event (4) and stores the data in a SQL Data Warehouse (5). For more, refer to the official documentation.

Wrap up

This blog discusses Azure Event Grid scenarios where Event Grid plays a central role, and how developers can leverage this service. Another scenario showed how Event Grid could collaborate with Event Hubs in high volume ingestion and migration of event data.

Event Grid is available in several Azure regions in the U.S., Europe, and Asia, with more to come soon. For pricing details, see the Event Grid pricing page. The full documentation on the Azure Event Grid service is available on the Microsoft Documentation site.