Azure Function Overview

This pillar page covers the core concepts of Azure Functions and how they can be better managed and monitored using Turbo360. To make Azure Function Apps easier to understand, it walks through a real-world order processing scenario. Before getting into that business scenario, let’s get the basics of Azure Functions and their characteristics right.

What is Azure Function?

Azure Functions is a serverless compute service that enables users to run event-triggered code without having to provision or manage infrastructure. As a trigger-based service, it runs a script or piece of code in response to a variety of events.

Azure Functions can be used to achieve decoupling, high throughput, and reusable, shared code. It is reliable enough to be used in production environments.

Azure Functions vs Web Jobs

An Azure Web Job is a piece of code that runs in Azure App Service. It is used to run background tasks. Azure Functions is built on top of Azure Web Jobs, with added capabilities. The table below compares Azure Functions and Web Jobs:

| | Azure Functions | Web Jobs |
|---|---|---|
| Trigger | Azure Functions can be triggered by any configured trigger, but a function does not run continuously | Web Jobs come in two types: Triggered Web Jobs and Continuous Web Jobs |
| Supported languages | Azure Functions supports various languages such as C#, F#, JavaScript (Node.js) and more | Web Jobs also support various languages such as C#, F#, JavaScript and more |
| Deployment | An Azure Function App is a separate App Service that runs in an App Service plan | Web Jobs run as background services for App Service apps such as Web Apps, API Apps and Mobile Apps |

Azure Functions Vs Logic Apps

An Azure Function is code triggered by an event, whereas an Azure Logic App is a workflow triggered by an event.

Azure Logic Apps can define a workflow with ease, consuming a range of APIs as connectors. These connectors perform the series of actions defined in the workflow. Like Azure Logic Apps, Azure Durable Functions can also be used to define a workflow, but in code.

| | Azure Functions | Azure Logic Apps |
|---|---|---|
| Trigger | Azure Functions can be triggered by a configured trigger such as HTTPTrigger, TimerTrigger, QueueTrigger and more | Azure Logic Apps can be triggered through APIs exposed as connectors. A workflow can also have multiple triggers |
| Defining Workflow | A workflow in Azure Functions can be defined using Azure Durable Functions. It consists of an Orchestrator Function that defines the workflow using several Activity Functions | A workflow in Azure Logic Apps can be defined with the Logic App designer, using various APIs as connectors |
| Monitoring | Azure Functions can be monitored using Application Insights and Azure Monitor | Azure Logic Apps can be monitored using Log Analytics and Azure Monitor |

Turbo360 can monitor both Azure Function Apps and Logic Apps.

Below is the detailed comparison on Azure Functions vs Logic Apps:

Next steps:

- When to use Azure Logic Apps and Azure Functions with some real-time comparison by Steef-Jan Wiggers

Quick Starts

Azure Functions

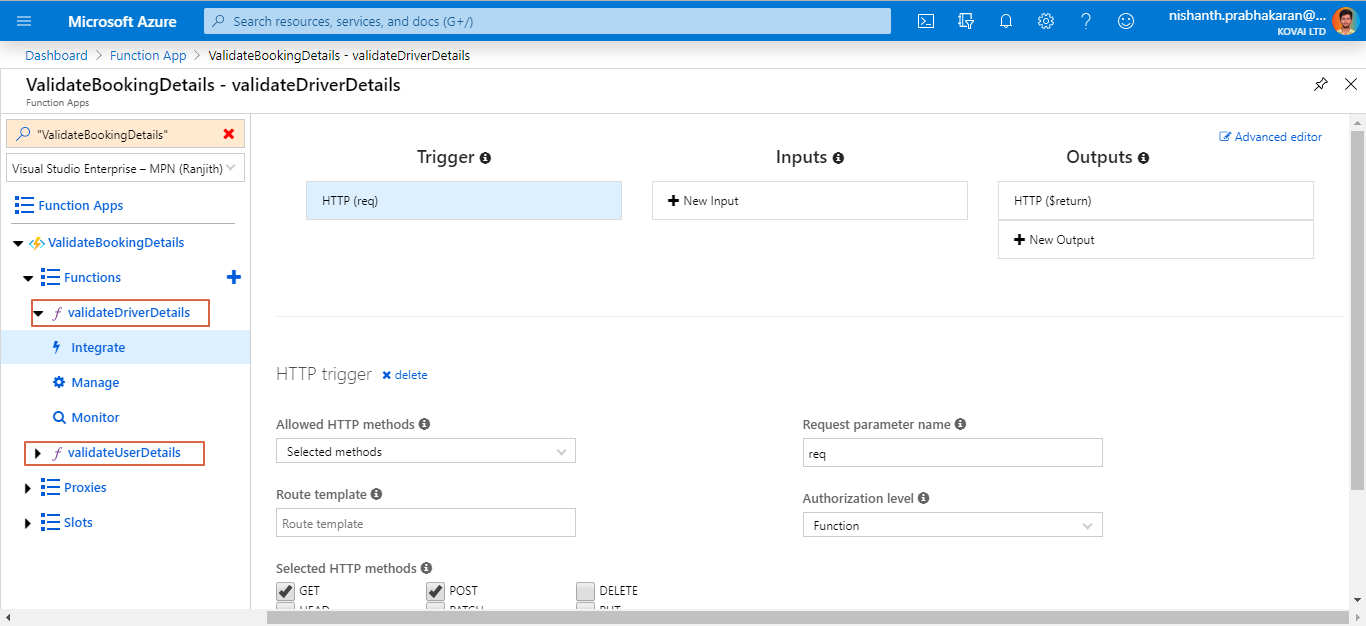

Azure Functions are the individual functions created in a Function App. Every function is invoked through its configured trigger. The Azure portal provides capabilities to create, manage and monitor functions and to integrate their inputs and outputs.

A function can also be tested by providing raw inputs. For monitoring, the portal offers solutions such as Application Insights, which provides live status monitoring through the invocation logs. Functions in the Function App can also be monitored based on app metrics.

Runtime Versions

Azure Functions runtime versions are tied to major versions of .NET. The table below lists each runtime version with its corresponding .NET version:

| Runtime Version | Availability | .NET Version |
|---|---|---|
| 3.x | Preview | .NET Core 3.x |
| 2.x | GA | .NET Core 2.x |
| 1.x | GA | .NET Framework 4.6 |

GA – Generally Available

Azure Functions 2.x is generally available, and 1.x is in maintenance mode. Migrating from 1.x to a later version is straightforward and can be done from the Azure portal itself.

How Long Can Azure Functions Run?

By default, a single function execution has a maximum of 5 minutes to complete. If the function runs longer than the maximum timeout, the Azure Functions runtime can end the process at any point after the timeout has been reached.
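
The timeout is controlled by the `functionTimeout` setting in the Function App's `host.json` file, which takes an `hh:mm:ss` value. Below is a minimal sketch that generates such a file. The helper name `host_json_with_timeout` is hypothetical, `"version": "2.0"` assumes a 2.x or later runtime, and the maximum value that is accepted depends on the hosting plan.

```python
import json
from datetime import timedelta


def host_json_with_timeout(timeout: timedelta) -> str:
    """Return host.json content that sets functionTimeout to the given duration."""
    total_seconds = int(timeout.total_seconds())
    hours, remainder = divmod(total_seconds, 3600)
    minutes, seconds = divmod(remainder, 60)
    settings = {
        "version": "2.0",  # assumption: runtime 2.x or later
        "functionTimeout": f"{hours:02d}:{minutes:02d}:{seconds:02d}",
    }
    return json.dumps(settings, indent=2)


if __name__ == "__main__":
    # Example: request a 10-minute timeout instead of the 5-minute default.
    print(host_json_with_timeout(timedelta(minutes=10)))
```

The printed JSON would be saved as `host.json` at the root of the Function App project.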

What Languages Does Azure Functions Support?

Azure Functions supports multiple languages, and the set of supported languages depends on the runtime version.

Functions 1.x:

Azure Functions 1.x supports C#, F# and JavaScript. However, Functions 1.x is in maintenance mode only.

Functions 2.x:

Azure Functions 2.x supports C# (.NET Core 2.2), JavaScript (Node 8 & 10), F# (.NET Core 2.2), Java 8, PowerShell Core 6, Python 3.7.x, TypeScript.

Functions 3.x:

Azure Functions 3.x is a preview version that supports C# (.NET Core 3.x), JavaScript (Node 8 & 10), F# (.NET Core 3.x), Java (Java 8), PowerShell Core 6, Python 3.7.x, TypeScript (Preview).

How Do You Call an Azure Function?

Azure Functions are called when they are triggered by events from other services. Because the platform is event driven, code can be triggered by events occurring in any third-party service or on-premises system.

Azure Functions provides templates to get you started with key scenarios, including the following:

- HTTPTrigger – Trigger the execution of your code by using an HTTP request.

- TimerTrigger – Execute clean up or other batch tasks on a predefined schedule.

- CosmosDBTrigger – Process Azure Cosmos DB documents when they are added or updated in collections in a NoSQL database.

- BlobTrigger – Process Azure Storage blobs when they are added to containers. You might use this function for image resizing.

- QueueTrigger – Respond to messages as they arrive in an Azure Storage queue.

- EventGridTrigger – Respond to events delivered to a subscription in Azure Event Grid. Supports a subscription-based model for receiving events, which includes filtering. A good solution for building event-based architectures.

- EventHubTrigger – Respond to events delivered to an Azure Event Hub. Particularly useful in application instrumentation, user experience or workflow processing, and internet-of-things (IoT) scenarios.

- ServiceBusQueueTrigger – Connect your code to other Azure services or on-premises services by listening to message queues.

- ServiceBusTopicTrigger – Connect your code to other Azure services or on-premises services by subscribing to topics.

Proxies

With Azure Functions Proxies, users can have a unified API endpoint for all the Azure Functions consumed by external resources. A proxy URL is simple to create: only the backend URL and HTTP method need to be defined. It is also possible to modify the requests and responses of the proxy, and advanced configurations are available.

Slots

With slots, Azure Functions can run in separate instances. Each environment can be exposed publicly through the endpoint provided by its slot. Slots are the right choice when functions need to be maintained across environments such as Production, Development and Staging. One instance is mapped to the Production environment, and it can be swapped on demand. Swapping can be done through either the portal or the Azure CLI.

Concepts

How Do I Make an Azure Function App?

Azure Functions can be built in two ways: through the Azure portal or using Visual Studio. Below is the step-by-step process to create and deploy Azure Functions.

Create Azure Functions in Azure Portal:

- Click Create a resource, select Azure Functions App in the Compute section, and click Create

- Provide the necessary details and create it

To Create Azure Functions in Visual Studio:

- Select File -> New Project. Select Azure Function project.

- Provide the necessary details like Name, path, Function App version and Trigger option. Click Create

After the Azure Functions project is created in Visual Studio, publishing it is even easier.

To Publish the Azure Functions from Visual Studio:

- Right-click the project name and select Publish.

- Provide details such as the resource group name, storage account and more. After this step, the publish profile is created and ready to use

- Click Publish. The Azure Functions can now be accessed in read-only mode in the Azure portal.

Compare 1.x vs 2.x

Azure Functions 1.0 challenges

- Need for additional language support, e.g. Java, Python, PowerShell

- Only able to host on Windows

- No support for development on Mac and Linux

- Assembly probing and binding issues for .NET developers

- Performance issues on a range of scenarios/languages

- Lack of UX guidance to production success

What’s new in Azure Functions 2.0?

- New Functions quick start by selected programming language

- Updated runtime built on .NET Core 2.1 (the previous version ran on .NET Framework)

- Deployment: Run code from a package

- .NET function loading changes

- Tooling Update: Visual Studio, CLI and VS Code

- Consumption-based SLA

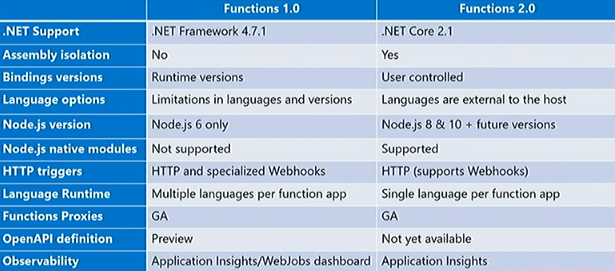

Key differences between Azure Functions 1.0 and 2.0

Some of the key differences between the two versions of Azure Functions are highlighted in the picture below:

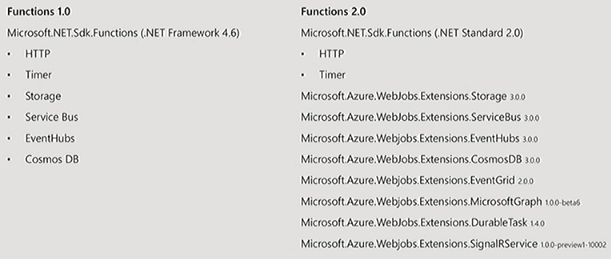

Bindings and Integration

In Azure Functions 1.0, many bindings were native to the runtime, so the runtime itself had to be changed in order to change a binding. In Azure Functions 2.0, the runtime is developed separately from the bindings (version independent), which gives a clearer extensibility model.

For more details, refer to the blog on Azure Functions Internals.

Pricing Details

Azure Function App pricing is based on execution time and total executions. It includes a monthly free grant of 1 million requests and 400,000 GB-s of resource consumption.

- Execution Time – $0.000016/GB-s

- Total Executions – $0.20 per million executions
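
As a rough illustration of how these two charges combine, the sketch below estimates a monthly bill from the rates and free grants listed above. The function name `monthly_cost` and the example workload are assumptions, and the sketch ignores details such as how memory size and execution time are rounded for billing.

```python
FREE_EXECUTIONS = 1_000_000          # monthly free grant of requests
FREE_GB_SECONDS = 400_000            # monthly free grant of resource consumption
PRICE_PER_GB_SECOND = 0.000016       # execution time rate from the list above
PRICE_PER_MILLION_EXECUTIONS = 0.20  # total executions rate from the list above


def monthly_cost(executions: int, avg_duration_s: float, memory_gb: float) -> float:
    """Estimate the monthly charge in dollars for a single workload."""
    gb_seconds = executions * avg_duration_s * memory_gb
    # Only consumption above the free grants is billed.
    billable_gb_seconds = max(0.0, gb_seconds - FREE_GB_SECONDS)
    billable_executions = max(0, executions - FREE_EXECUTIONS)
    execution_time_cost = billable_gb_seconds * PRICE_PER_GB_SECOND
    execution_count_cost = billable_executions / 1_000_000 * PRICE_PER_MILLION_EXECUTIONS
    return round(execution_time_cost + execution_count_cost, 2)


if __name__ == "__main__":
    # Hypothetical workload: 3 million executions, 1 second each, 0.5 GB of memory.
    print(monthly_cost(3_000_000, 1.0, 0.5))
```

For the hypothetical workload, 1,500,000 GB-s are consumed, 1,100,000 GB-s are billable ($17.60), and 2 million executions are billable ($0.40), for a total of $18.00.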

With the Azure Functions Premium plan, users get enhanced performance. It is billed based on vCPU-s and GB-s.

- vCPU Duration – $0.173 vCPU/hour

- Memory Duration – $0.0123 GB/hour

Understanding Metrics

Azure Function Apps expose an extensive set of metrics that can be monitored to assess the performance, availability and reliability of Azure Functions. Below is the list of metrics and their descriptions:

| Metric Name | Description | Monitoring Perspective |
|---|---|---|
| Average Response Time | The average time taken for a response from the Function App. Unit: Seconds. Aggregation: Average. Dimension: Entity Name | Performance |
| Average Memory working set | Average memory consumed by a Function App execution. Unit: Bytes. Aggregation: Average. Dimension: Entity Name | Performance |
| Function Execution count | Number of function executions. Unit: Count. Aggregation: Total. Dimension: Entity Name | Reliability |
| Thread Count | Number of threads allocated for a function execution. Unit: Count. Aggregation: Total. Dimension: Entity Name | Performance |
| Http 1xx | Number of Information responses. Unit: Count. Aggregation: Total. Dimension: Entity Name | Availability |
| Http 2xx | Number of Successful responses. Unit: Count. Aggregation: Total. Dimension: Entity Name | Availability |
| Http 3xx | Number of Redirection responses. Unit: Count. Aggregation: Total. Dimension: Entity Name | Availability |
| Http 4xx | Number of Error responses. Unit: Count. Aggregation: Total. Dimension: Entity Name | Availability |
| Http Server Error | Number of Server Error responses. Unit: Count. Aggregation: Total. Dimension: Entity Name | Availability |
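
The HTTP metrics in the table group responses by status class. Below is a minimal sketch of that grouping, assuming a plain list of status codes as input. The function names are hypothetical and are not part of any Azure SDK.

```python
from collections import Counter
from typing import Iterable


def metric_for_status(status_code: int) -> str:
    """Map an HTTP status code to the metric name used in the table above."""
    if not 100 <= status_code <= 599:
        raise ValueError(f"not a valid HTTP status code: {status_code}")
    if status_code >= 500:
        return "Http Server Error"
    return f"Http {status_code // 100}xx"


def count_by_metric(status_codes: Iterable[int]) -> Counter:
    """Count responses per metric, mirroring the Total aggregation."""
    return Counter(metric_for_status(code) for code in status_codes)


if __name__ == "__main__":
    print(count_by_metric([200, 201, 302, 404, 500, 503]))
```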

What Are Azure Functions Used For?

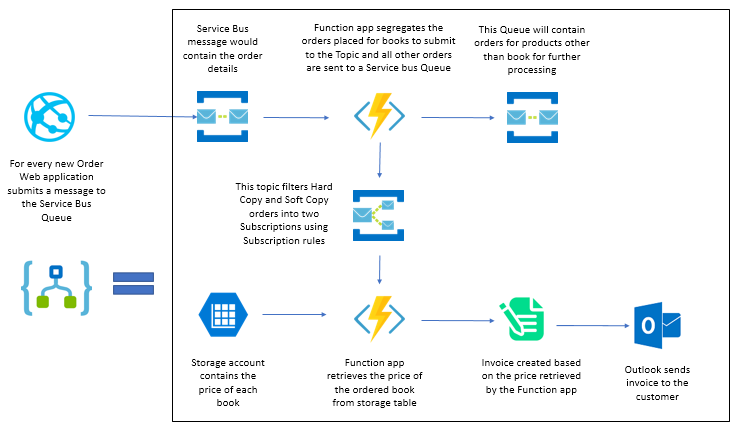

For a better understanding of Azure Functions, let’s take a simple order processing scenario in an e-commerce application.

Below is the workflow:

- Customer places an order on the e-commerce website

- The website submits a new order message to the Service Bus Queue

- An Azure Function filters the orders for the focused item (Books) and pushes them to a Topic

- The other orders are sent to a Service Bus Queue

- The Book orders are further segregated based on order copy type using Topic Subscription Rules

- An Azure Function retrieves the price of the ordered Book from the storage table and computes the order price

- An invoice is created based on the computed price returned by the function

- A notification is sent to the customer with the product details and invoice through Microsoft Outlook

In the above scenario, two functions are used. One checks whether the product type is a book or another product. The other retrieves the price of the book. Both functions perform validation operations that can be triggered through Logic Apps.
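
The logic inside the two functions can be sketched in plain Python, leaving out the Service Bus and storage bindings. Everything named here (`Order`, `route_order`, `compute_order_price`, the destination names and the `BOOK_PRICES` dictionary standing in for the storage table) is a hypothetical illustration, not code from the scenario itself.

```python
from dataclasses import dataclass

# Hypothetical stand-in for the storage table that holds book prices.
BOOK_PRICES = {"azure-basics": 25.0, "serverless-patterns": 40.0}


@dataclass
class Order:
    order_id: str
    product_type: str
    item: str
    quantity: int


def route_order(order: Order) -> str:
    """First function: decide whether the order goes to the topic or the queue."""
    if order.product_type.lower() == "book":
        return "books-topic"
    return "other-orders-queue"


def compute_order_price(order: Order) -> float:
    """Second function: look up the unit price and compute the order price."""
    unit_price = BOOK_PRICES[order.item]  # raises KeyError for an unknown book
    return unit_price * order.quantity


if __name__ == "__main__":
    order = Order("1001", "Book", "azure-basics", 2)
    print(route_order(order), compute_order_price(order))
```

Keeping the decision logic in small pure functions like these makes it easy to test separately from the triggers that invoke it.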

Where Are Azure Functions Used?

Azure Functions are best suited for smaller apps whose events can be handled independently of other websites. Common uses include sending emails, starting backups, order processing, task scheduling such as database cleanup, sending notifications and messages, and IoT data processing.

Azure Functions Advantages

As a cloud service, Azure Functions has many advantages. Some of them are highlighted below:

Pay-as-you-go model – Azure Functions follows a pay-as-you-go model, so users pay only for what they use. Cost is based on execution time and the number of executions per month. The cost structure is described above in the Pricing Details section.

Supports a variety of languages – Azure Functions supports major languages such as Java, C#, F#, Python and more. Refer to the supported languages section above to know more.

Easy integration with other Azure services – Azure Functions can be easily integrated with other Azure services such as Azure Service Bus, Event Hubs, Event Grid, Notification Hubs and more.

Trigger-based execution – Azure Functions are executed based on the configured triggers. Various triggers are supported, such as HTTP Trigger, Queue Trigger, Event Hub Trigger and more. As a trigger-based service, it runs on demand. Refer to the trigger section above to know more about the available triggers.

Why Are Azure Functions Serverless?

Functions provides serverless compute for Azure. You can use Functions to build web APIs, respond to database changes, process IoT streams, manage message queues, and more. Because Azure Functions can connect to many other applications running on-premises or in the cloud, offering it as a serverless service provides better scalability and efficiency.

Azure Durable Functions

Azure Durable Functions is an extension of Azure Functions that is used to write stateful functions. It consists of orchestrator functions and entity functions that can be defined as a workflow. As a state-based service, it records checkpoints and can restart from them.

Azure Durable Functions supports languages such as C#, JavaScript and F#.

Orchestrator Client:

The Orchestrator Client is the starting point of a Durable Function. It calls the Orchestrator Function, which defines the workflow of the orchestration.

Orchestrator Function:

The Orchestrator Function holds the workflow of the orchestration. This workflow consists of several pieces of code written as functions, called Activities. It may also call other orchestrator functions. The Orchestrator Function is called by an Orchestrator Client.

Activity Function:

Activity Functions are the basic unit of work in a Durable Function. Each Activity performs an asynchronous task. Activity Functions support all languages that Azure Functions supports, whereas Orchestrator Functions are limited to the languages that Durable Functions supports (listed above).
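
The relationship between the client, the orchestrator and the activities, together with checkpoint-and-restart, can be illustrated with a small simulation. This is a conceptual sketch only and does not use the Durable Functions extension. The names `run_orchestration`, `order_orchestrator`, `get_price` and `compute_total` are hypothetical, and the list `history` stands in for the state that the real service persists.

```python
from typing import Any, Callable, Dict, Generator, List, Tuple

ActivityCall = Tuple[str, Any]  # (activity name, activity input)


def get_price(item: str) -> float:
    """Activity: look up a unit price (hard-coded for the sketch)."""
    return {"azure-basics": 25.0}[item]


def compute_total(data: dict) -> float:
    """Activity: compute the order total."""
    return data["price"] * data["quantity"]


def order_orchestrator(order: dict) -> Generator[ActivityCall, Any, float]:
    """Orchestrator: defines the workflow as a sequence of activity calls."""
    price = yield ("get_price", order["item"])
    total = yield ("compute_total", {"price": price, "quantity": order["quantity"]})
    return total


def run_orchestration(orchestrator: Callable, orchestrator_input: Any,
                      activities: Dict[str, Callable], history: List[Any]) -> Any:
    """Client side: drive the orchestrator, replaying checkpointed results first."""
    workflow = orchestrator(orchestrator_input)
    step = 0
    try:
        call = next(workflow)
        while True:
            if step < len(history):
                result = history[step]          # replay a checkpointed result
            else:
                name, argument = call
                result = activities[name](argument)
                history.append(result)          # checkpoint the new result
            step += 1
            call = workflow.send(result)
    except StopIteration as finished:
        return finished.value


if __name__ == "__main__":
    activities = {"get_price": get_price, "compute_total": compute_total}
    history: List[Any] = []
    print(run_orchestration(order_orchestrator,
                            {"item": "azure-basics", "quantity": 2},
                            activities, history))
```

If the run is restarted with a non-empty `history`, the activities whose results are already recorded are not executed again, which is the idea behind restarting from a checkpoint.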

Tools

Application Insights

Application Insights is an Application Performance Management (APM) service for monitoring application performance and latency. With this tool, users can detect anomalies and diagnose issues. It can be used on various platforms such as .NET, Node.js, Java EE, Python (preview) and more. It has various capabilities such as Smart Detection, API Testing, Failure Detection, Application Map, Live Metrics Stream, Analytics and more. It also integrates with Alerts, Power BI, Visual Studio, REST API and Continuous Export.

Azure Monitor

Azure Monitor can be used to monitor Azure services from various perspectives. It helps maximize the availability and performance of an application or service. Azure Monitor offers several services such as Application Insights, Log Analytics, Alerts and Dashboards. It also integrates with Power BI, Event Hubs, Logic Apps and API.

Metrics Explorer:

With Metrics Explorer, users can examine the performance and latency of an application or service in a chart view. Metrics Explorer shows analytics results based on the filters configured on the extensive set of metrics.

Log Analytics:

Log Analytics is a tool used to manage Azure Monitor queries. With Log Analytics, users can perform monitoring and diagnostics logging for Azure Logic Apps. Users can also query the logs for efficient debugging.

Alerts:

With Alerts in Azure Monitor, users receive an alert report when there is a violation. Alerts are based on the metrics that were configured when the alert was created. A single alert can monitor only a single entity, based on the couple of metrics configured.

Are Azure Functions Free?

Azure Functions are billed on a pay-as-you-go basis. To get started, however, Azure provides 5 GB of free bandwidth, and usage above that is charged. Azure Functions can also be used with Azure IoT Edge at no charge.

Turbo360

Azure Function Apps can solve huge business challenges, but managing and monitoring them in the Azure portal is quite challenging. This is where Turbo360 comes in: it offers management and monitoring of Azure resources in the application context. With Turbo360, Azure Functions can be managed, monitored and analyzed from various perspectives.

Turbo360 Vs Application Insights

Although Application Insights has various features for App Services, it cannot monitor other services such as Azure Service Bus, Logic Apps, etc. It also cannot monitor Azure entities in the application context.

With Turbo360, by contrast, it is possible to monitor entities in the context of the business application, and users can get a consolidated monitoring report that reveals the health status of all the entities along with the root cause of errors. Turbo360 also provides intelligent automation to ensure that the production environment stays in the Enabled state.

With BAM in Turbo360, users can perform end-to-end distributed tracing on business processes (i.e., workflows of Logic Apps, Azure Durable Functions or custom web applications). Find below a detailed comparison of Turbo360 and Application Insights.

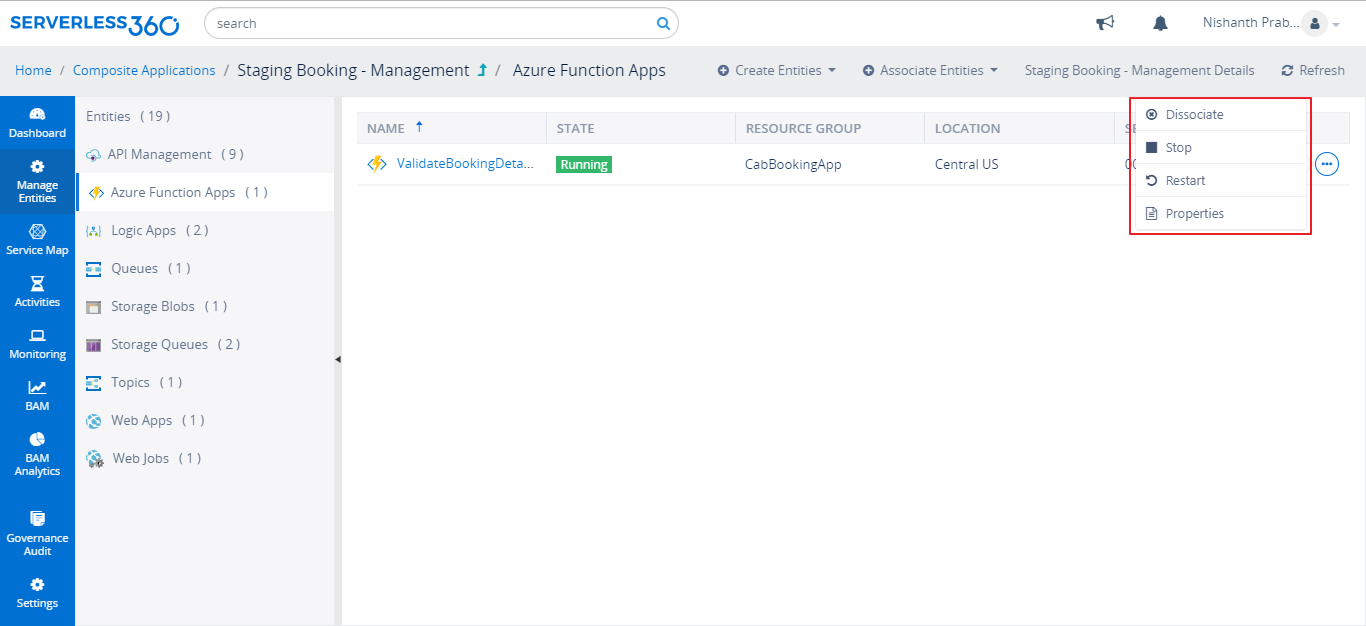

Manage Azure Functions in Turbo360

Managing Azure Functions in Turbo360 is simple and straightforward. Users can directly associate an Azure Function with the appropriate Composite Application that represents the business application. Along with application-level management and monitoring, users can also leverage the integrated tooling described below:

Start/Stop the Functions

In Turbo360, users can perform remote actions such as starting or stopping the Function App or individual functions, and restarting the Function App. Because these actions are available within Turbo360, users do not need to switch between the Azure portal and Turbo360 for these frequently performed tasks.

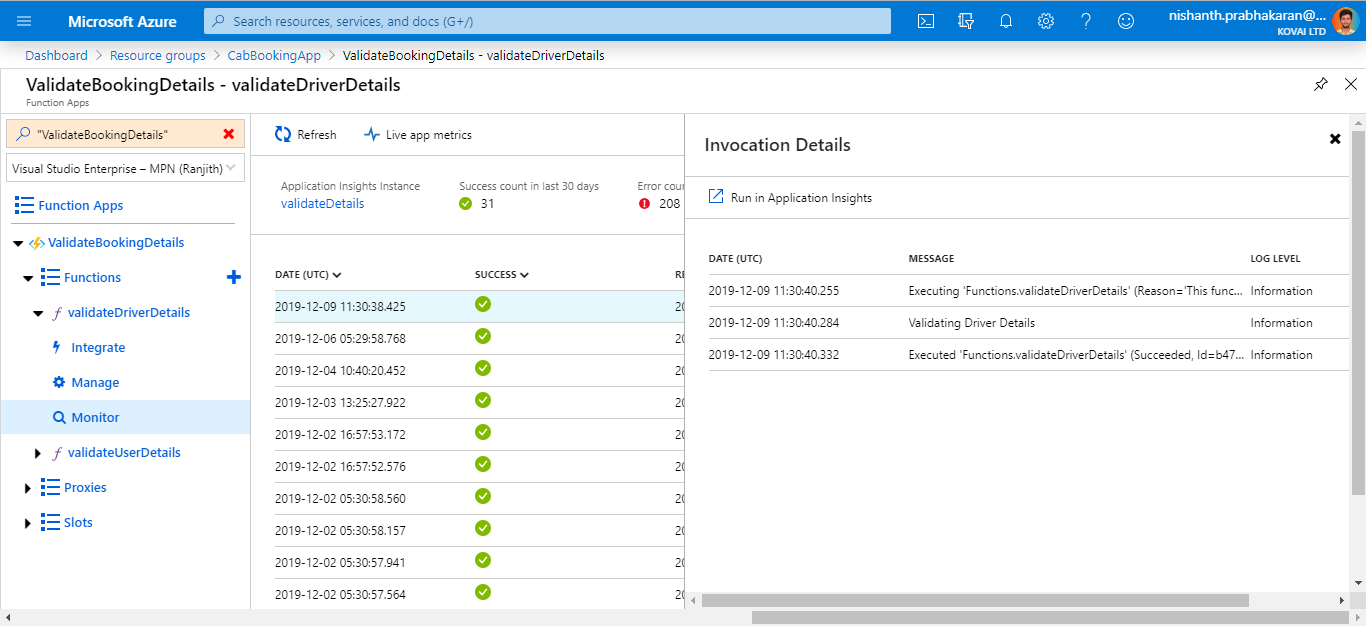

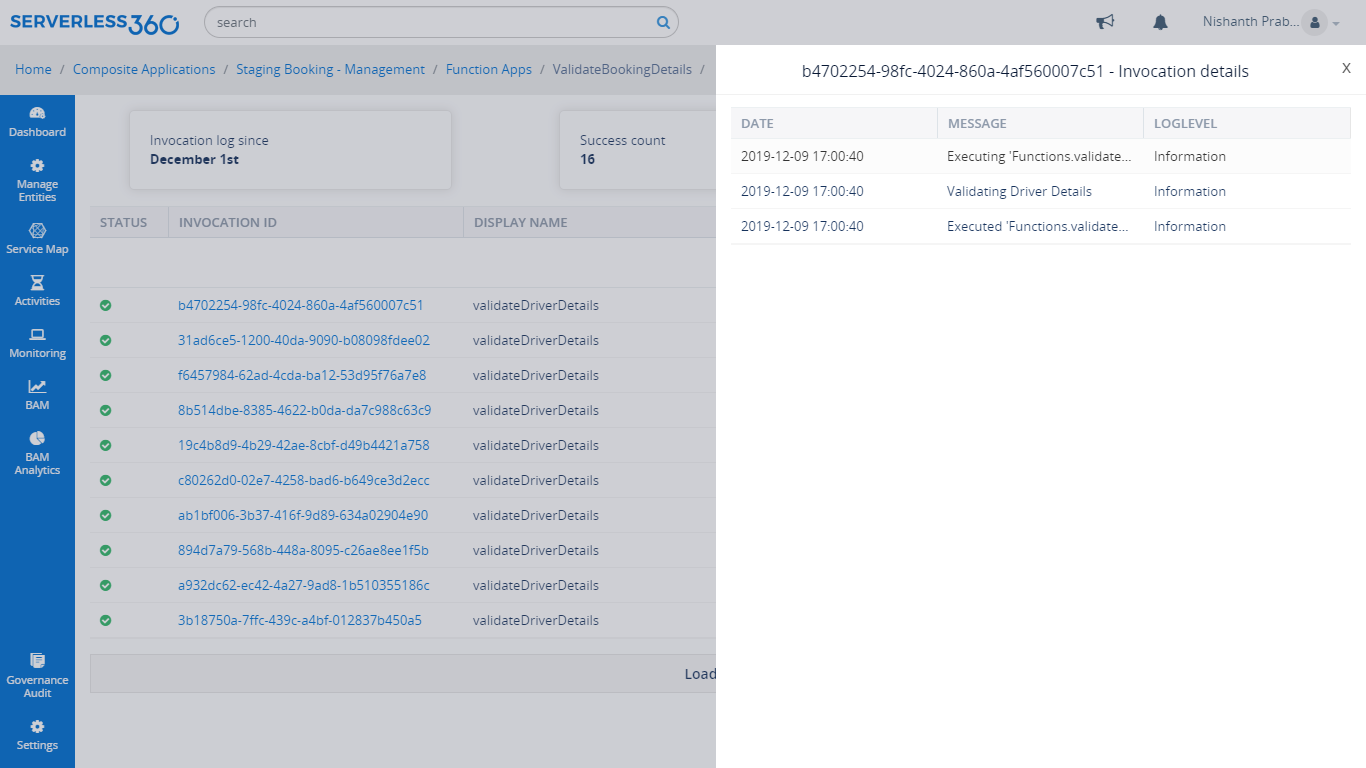

View Invocation Logs

From Turbo360, users can investigate issues in Azure Function executions by viewing the invocation logs. Turbo360 also provides the success count, error count and filtering for faster retrieval of function executions. Invocation logs can be retrieved for both 1.x and 2.x versions.

View Properties of the Azure Functions

Users can also view properties such as App state, Region, Operating System and Hosts of the Azure Function App, and properties such as type, direction and methods of the individual functions.

Monitor Azure Functions in Turbo360

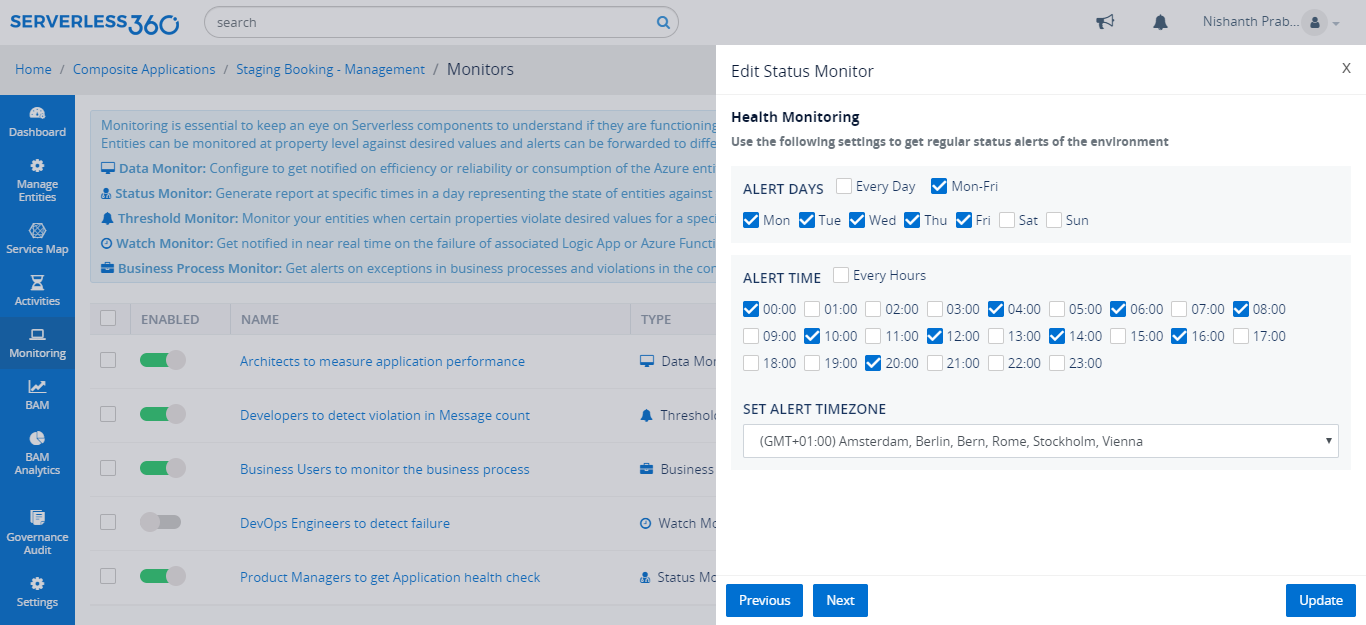

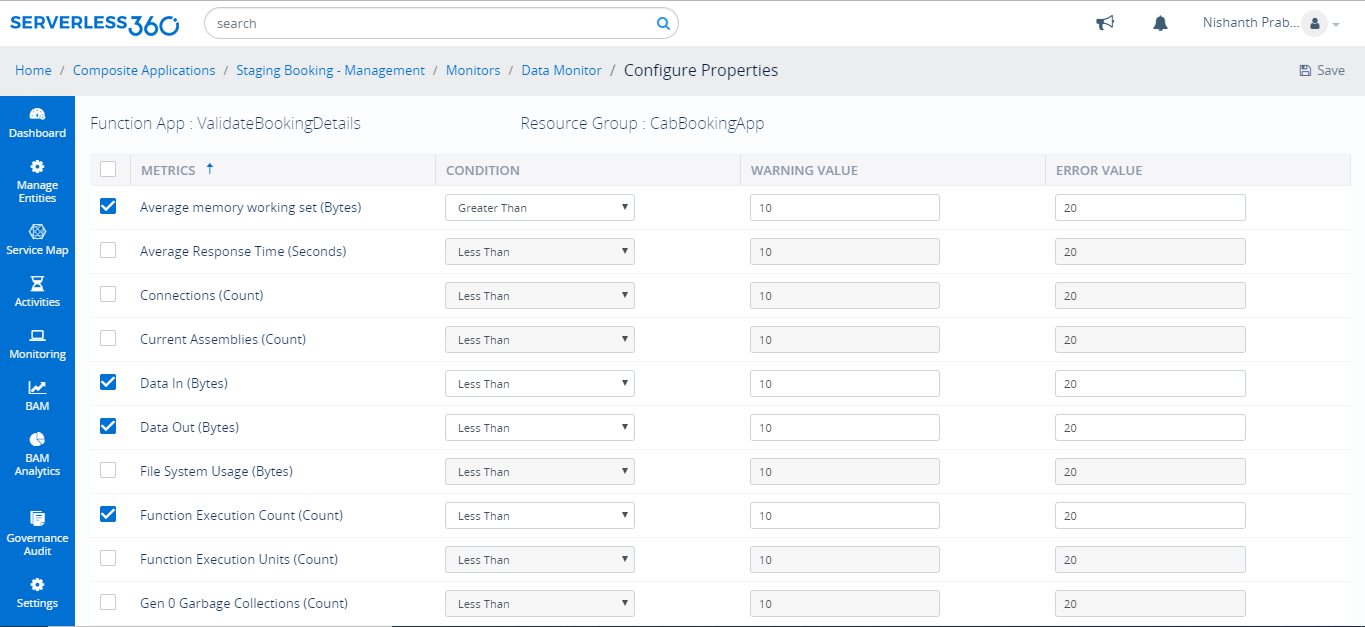

Although Azure provides monitoring solutions such as Azure Monitor and Application Insights, users cannot monitor multiple entities on various metrics with them. With monitors in Turbo360, by contrast, it is possible to monitor multiple entities based on metrics at the application level. Below are the types of monitors with which users can monitor Azure services from various perspectives.

State Monitoring

Consider a case where the user needs an alert report on the health status of all the entities in the business application every two hours or at the end of the day. This calls for a specialized monitor. With the Status Monitor in Turbo360, users can monitor the state of an entity and get a health report of the Azure services. For this monitor, users can configure the days of the week and the hours of the day at which the alert report is sent.

Performance Monitoring

There is often a need to monitor the HTTP responses of Azure Functions, such as internal server errors (500), successful responses (200) and so on. With the Data Monitor in Turbo360, users can monitor an Azure Function App on HTTP responses and on an extensive set of other metrics.

It is also possible to monitor the number of function executions, data in and out, average response time and more.

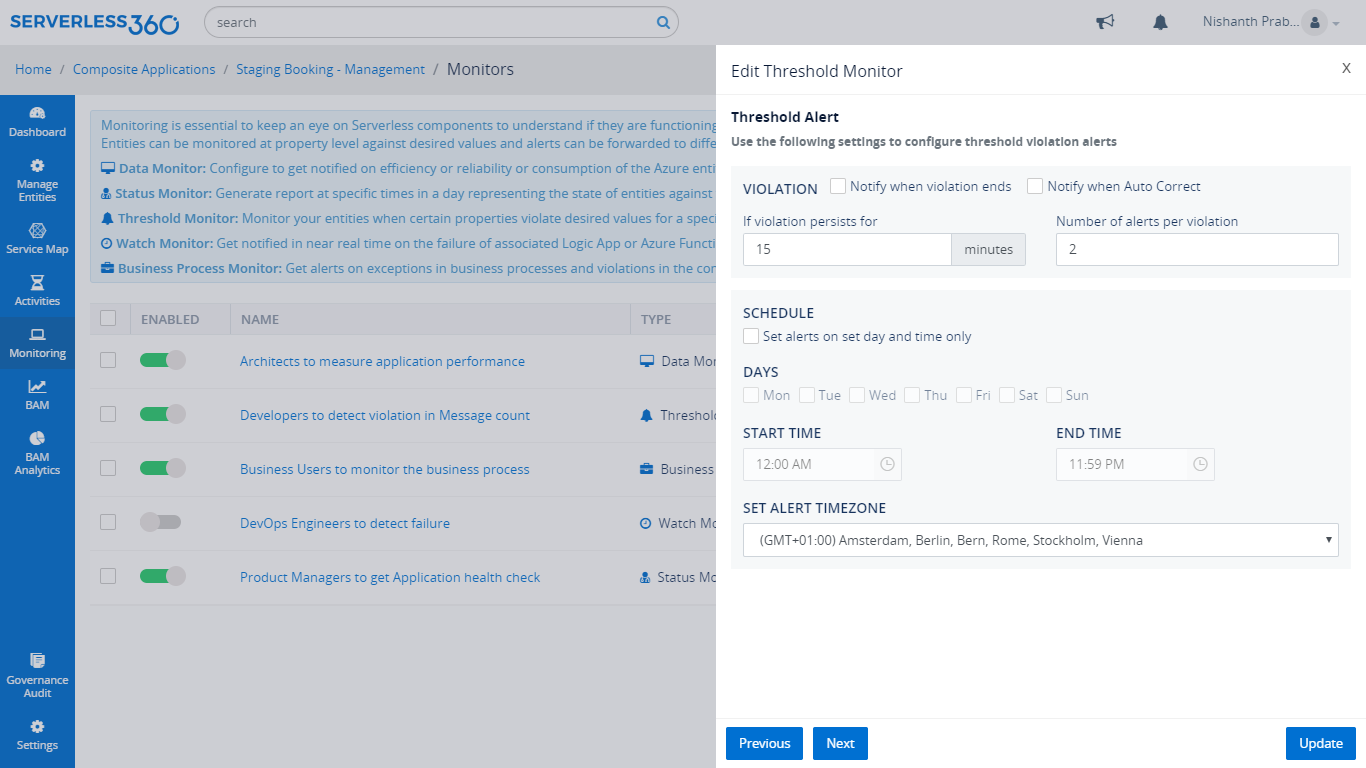

Violation Monitoring

Consider a scenario where a DevOps engineer needs to know the status of the entities only when there is a violation. With the Threshold Monitor in Turbo360, users get an alert when a violation persists beyond a defined number of minutes. The Threshold Monitor also provides an option to auto-correct the state of the entities, with which Turbo360 ensures that the state of the Azure services is as expected.
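
The idea of alerting only when a violation persists can be sketched generically as follows. This is an illustration of the concept under assumed inputs (timestamped metric samples in chronological order) and is not Turbo360's implementation. The function name `first_alert_time` and its parameters are hypothetical.

```python
from datetime import datetime, timedelta
from typing import Iterable, Optional, Tuple


def first_alert_time(samples: Iterable[Tuple[datetime, float]],
                     threshold: float,
                     persist_for: timedelta) -> Optional[datetime]:
    """Return the time at which a violation has lasted at least persist_for."""
    violation_started: Optional[datetime] = None
    for timestamp, value in samples:  # assumed to be in chronological order
        if value > threshold:
            if violation_started is None:
                violation_started = timestamp
            if timestamp - violation_started >= persist_for:
                return timestamp
        else:
            violation_started = None  # violation cleared, reset the clock
    return None


if __name__ == "__main__":
    start = datetime(2024, 1, 1, 12, 0)
    values = [1, 9, 9, 2, 9, 9, 9, 9]  # one sample per minute
    samples = [(start + timedelta(minutes=i), v) for i, v in enumerate(values)]
    print(first_alert_time(samples, threshold=5, persist_for=timedelta(minutes=2)))
```

In the example, the first spike clears after two samples and raises no alert, while the second spike is reported once it has lasted two minutes.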

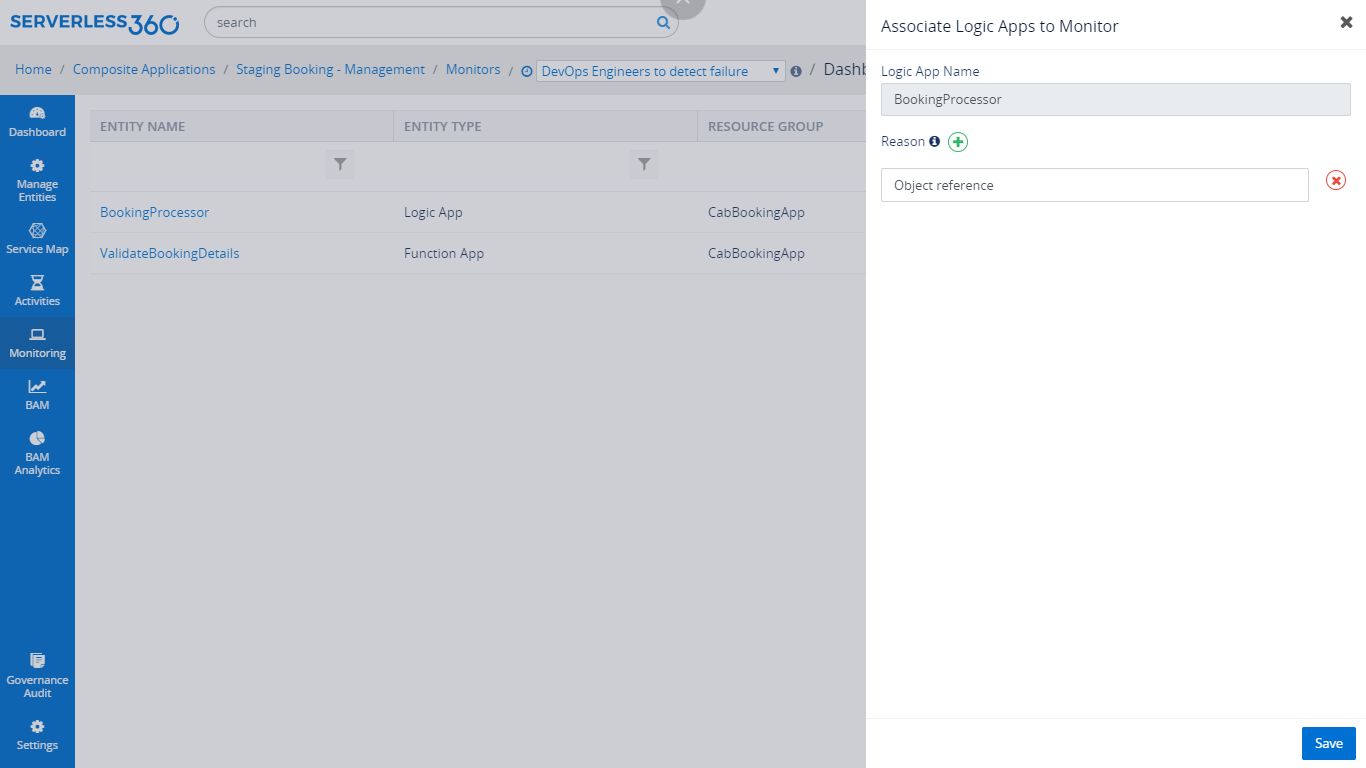

Failure Monitoring

Consider a developer who wants to be notified whenever an Azure Function invocation fails. With the Watch Monitor in Turbo360, users can keep an eye on Azure Functions for failures in their execution. It sends an alert report with the error reason whenever an Azure Function execution fails.

Business Activity Monitoring

Consider a business support person who wants to find errors or exceptions in a business process in order to correct and reprocess them. With BAM in Turbo360, users can perform end-to-end tracking on the business processes of Azure serverless applications that include Azure Functions.

Track Azure Durable Functions using BAM

If the need is to track Azure Functions or a custom web application, BAM in Turbo360 is the right choice. With BAM, users can track the message flowing through every stage of a transaction in a business process built with Azure Functions. Tracking of a business process built with Azure Functions can be done with the .NET library of Turbo360 BAM. In other environments such as JavaScript, F# or Java, users can leverage the exposed API of Turbo360 BAM to perform end-to-end tracking. BAM offers many advantages, such as:

- End to End Tracking of Business Process

- Tracking Custom Properties

- Error Detection

- Reprocessing Failed Business process

- Easy Instrumentation

Check the Azure Functions monitoring feature page for more insights.

Turbo360 helps streamline Azure monitoring, distributed tracing and documentation, and optimize cost.
