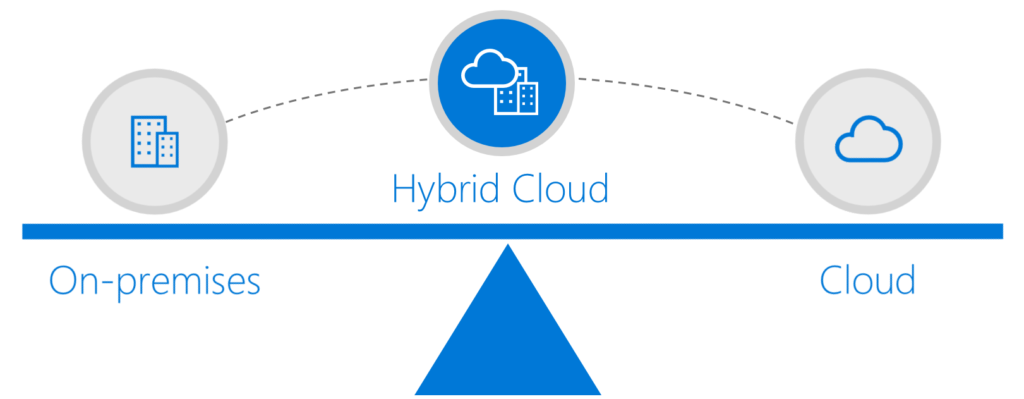

These days we see a lot of companies looking into Azure, however most of these companies already have a lot of applications running on premises. This means they will probably have legacy systems which can’t be moved, but still do want to expose the data of these systems. Also, you might not be allowed to move certain data to Azure due to the company or government regulations, or because your on-premises systems cannot (yet) work with Azure databases. Luckily Microsoft is aware of this as well, and is focusing heavily on hybrid integration, which means you can seamlessly integrate your on-premises systems with your cloud solutions.

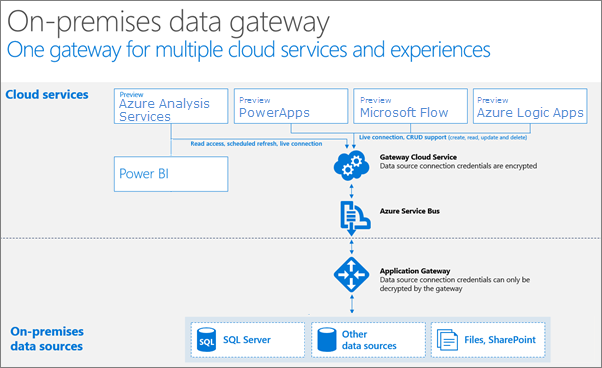

One of the solutions Azure offers for this is the On-premises data gateway, which allows us to connect our Azure solutions to our on-premises data sources like SQL Server, Oracle, SharePoint and more through a secure connection. For this, we install a gateway on the local machine, which is used by the Azure data gateway Cloud Service to send queries to the on-premises system, execute these queries, and send back the results.

In this post, I have an on premises SQL Server running with the Wide World Importers sample database. We will be using the on premises data gateway to use our local data in our Azure solutions, without having to move the database.

Install the On-Premises Data Gateway

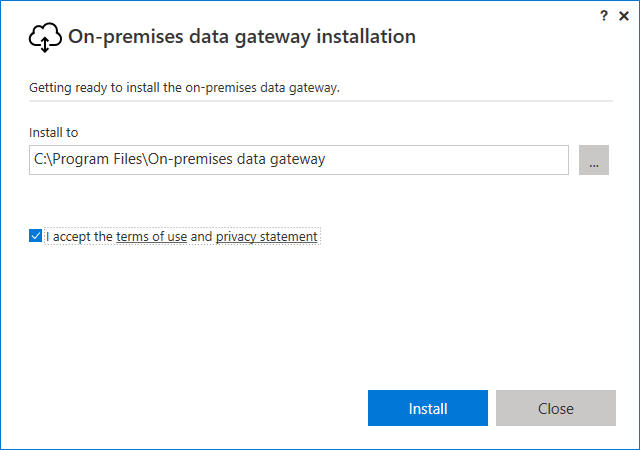

We will start by installing the on premises data gateway on our local machine. An easy to use installer is provided.

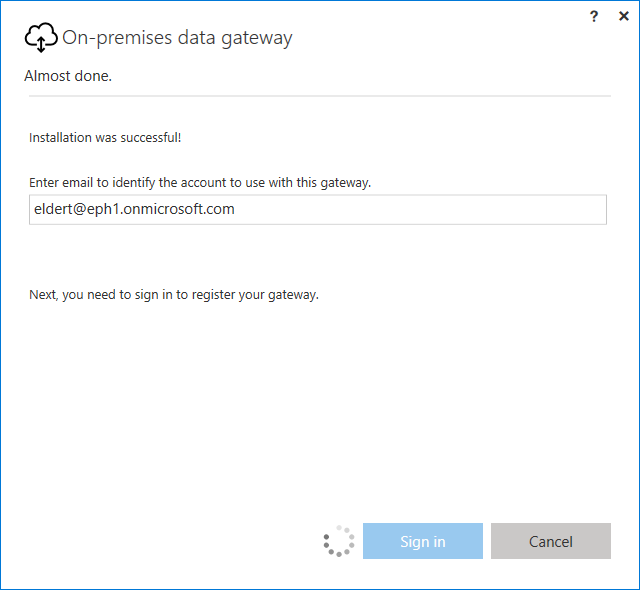

Once the gateway is installed, we need to provide the email which will be used to connect to Azure. This has to be a work or school email address. If you are using a Microsoft Account (e.g. outlook.com, live.com) you can use your Azure account to create a work or school email address by following these steps, which will show you how to create an organizational account in your Azure Active Directory.

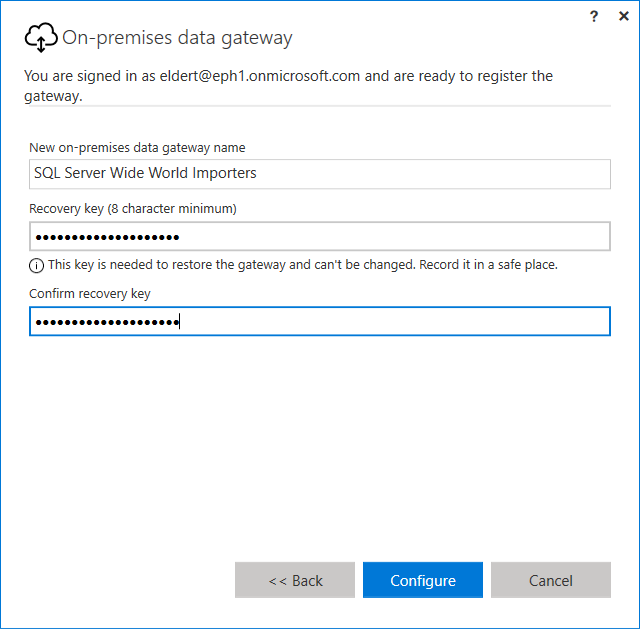

Once signed in, choose to register a new gateway. Do note you can only install one gateway per machine. Give the gateway a descriptive name, and make sure to save your recovery key in a secure place.

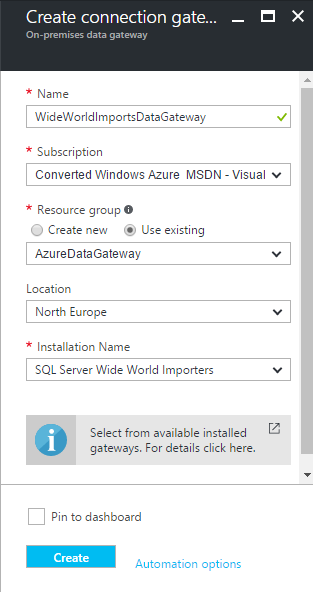

Create Azure Data Gateway Resource

Now that we have finished installation of our local gateway, we will register it in Azure as well. In the Azure portal, go to the On-premises Data Gateways blade. Choose to add a new gateway, give it a descriptive name, and make sure to select the On-premises gateway installation we have just installed.

Logic Apps

As we have our on-premises data gateway completely in place, we can start using it from our Azure solutions. The on-premises data gateway can be used from various parts in Azure, like PowerBI, PowerApps, Flow and Logic Apps. For this post, I will show how we can use, retrieve and update data on our local database from a Azure Logic App.

Retrieve Records

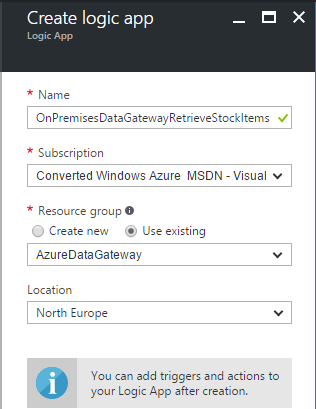

We will start by retrieving records from our local database. For this, we will create a new Logic App, which will receive an HTTP request, use the On-premises data gateway to retrieve all records from the StockItems table, and return these to the caller.

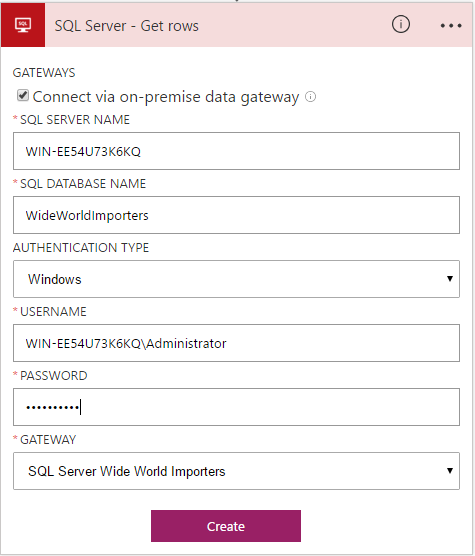

To communicate with our local database, look for the SQL Server actions, in this case the SQL Server – Get rows action. As this is our first time connecting to the On-premises data gateway, we will have to create a new API connection. To do this, you can check the option to connect via our On-premises data gateway, and make sure to select the gateway we just created. The SQL Server Name is the local name of the server, and make sure to use a fully qualified domain name format for the username, even if you are using a local user.

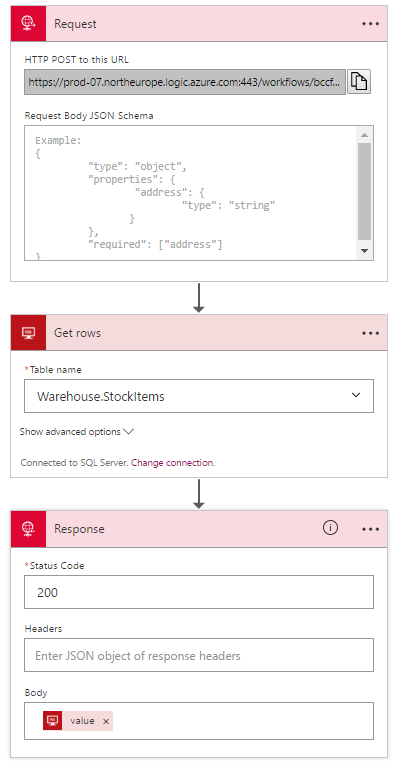

Now that we have our API connection in place, we will be able to use this from our other Logic Apps as well. We can now configure our Logic App to retrieve the records from the StockItems table, and return these in the response to the caller. When selecting the Table Name field, you will notice that the On-premises data gateway retrieves all the tables from our local database for you, and you can simply pick the table which you want to work with.

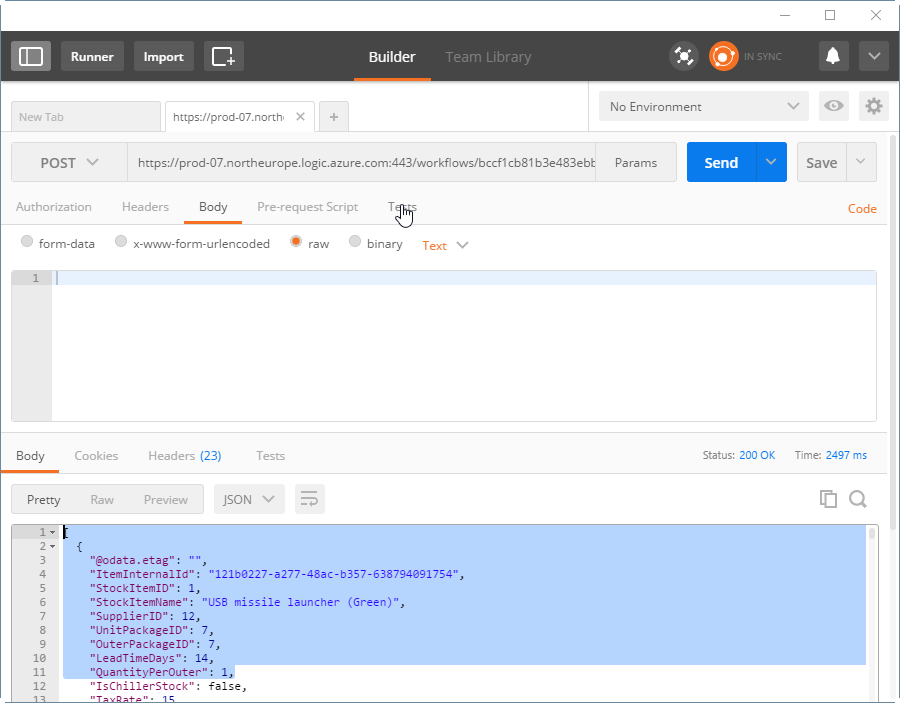

To test our flow, we can use an application like Postman. Copy the generated URL from the Request trigger in our Logic App into Postman, set the HTTP verb to POST and specify a body if needed, and press Send. You will now see the records, retrieved by our Logic App from our local database, through the On-premises data gateway.

Update Record

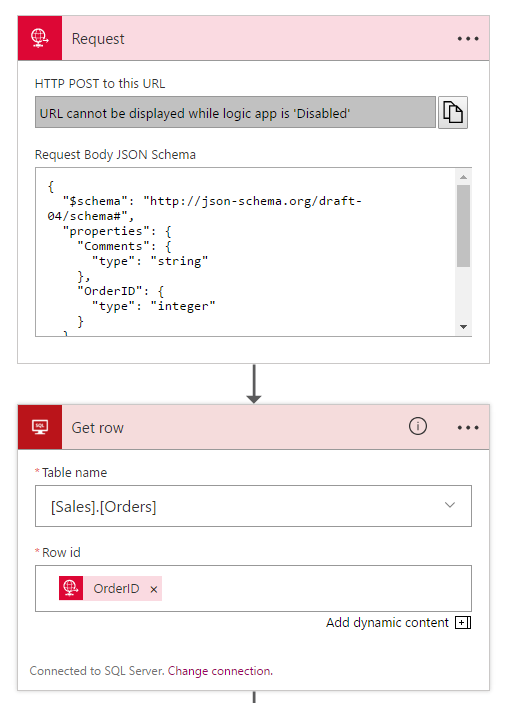

Next, let’s see how we can manipulate existing data in our database. We will start by defining a json-schema on the request trigger, which can be generated from http://jsonschema.net. By setting this schema here, we will be able to use the contents from our incoming message as dynamic content when setting properties in the following actions. For the SQL connection, we can re-use the API connection we created in the previous step. The format that will be used in this request is as following, allowing us to add a comment to an existing order.

{

“OrderID”:1,

“Comments”:“My updated sample order”

}

To update the order, we will first get the current order, so we can set the current values in all fields except for the Comments field, which will be updated with the data we received in the request calling the Logic App.

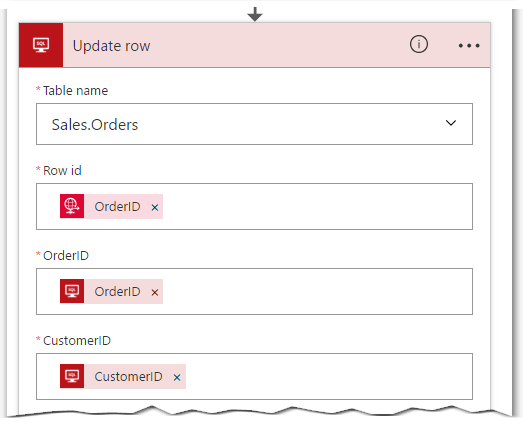

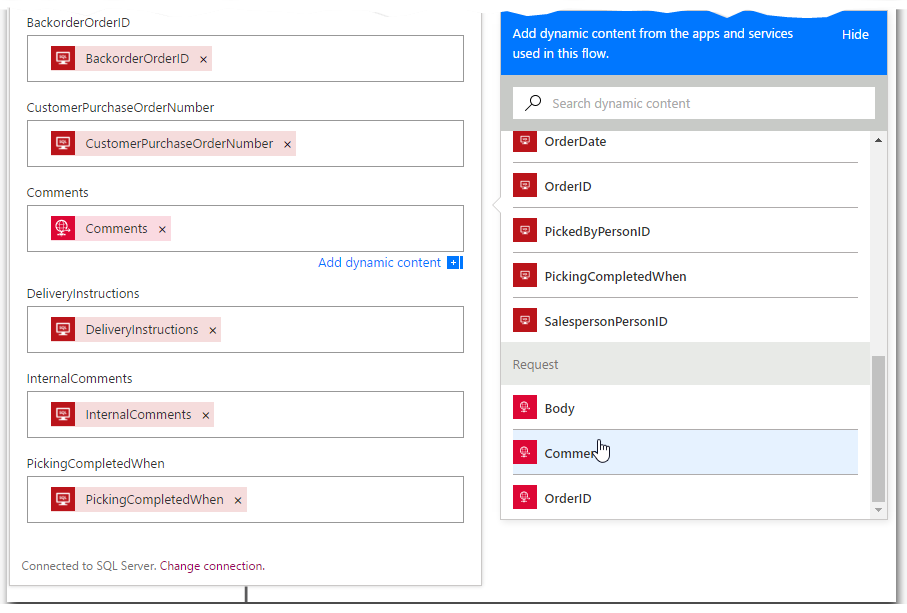

Now that we have the data of the current order, we will update the order. Insert an Update Row action, and set all fields with the data we received from the Get row action, except for the Comments field, which we will use from the incoming request.

Now use Postman to post a message to the endpoint which was generated for this Logic App, and use the message specified earlier. The order in our database will now get updated with the new comments we sent in using Postman.

Conclusion

As we have seen here, it is very easy to expose our local data to our Azure solutions, in a secure way, and with minimal time needed. This gives us the option to move towards Azure, without the need to migrate all our data or applications, giving us the best of both worlds. In my opinion, this hybrid way of integration will be the way to go forward, as it gives companies the opportunity to move to Azure at their own pace, keeping those legacy systems they need on premises, while getting the added value of Azure when it comes to speed of deliverability and scalability.

Also, check out Introducing Azure Logic Apps Integration Service Environment (ISE) blog.